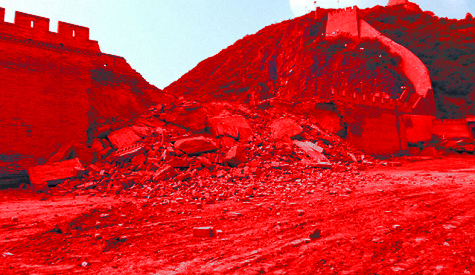

Bypassing the Great Firewall - Obscure Anonymization Protocols

Made i̶n̶ for China

Introduction

Internet censorship has become an increasingly relevant topic over the last year as governments worldwide explore tools for controlling online traffic. These include the highly invasive Chat Control proposed by the European Union and the UK’s Online Safety Act. With broader discussions around VPN restrictions, it feels like Western democracies are on a trajectory towards state censorship, not unlike the systems already deployed in authoritarian states. This blog post reviews and discusses the protocols used to circumvent state internet control in Iran, China, and Russia.

Academic literature on censorship circumvention, particularly the body of work from GFW Report, USENIX Security, and FOCI (Free and Open Communications on the Internet), provides well-documented case studies of the technical arms race between state-level filtering and circumvention tools.

The Chinese Golden Shield Project, initiated in 1998, remains the most extensive and well-studied example. Its networking subsystem, colloquially known as “the Great Firewall”1, combines deep packet inspection, DNS cache poisoning, IP blacklisting, and active probing to control what foreign content can be accessed from within the Chinese autonomous routing domain. The project operates under the principle that “opening a window for fresh air will inevitably allow some flies in” - a framing that has informed censorship policies across multiple nations.

Despite over two decades of investment and development, circumvention persists.

There is a well-documented cycle: censors develop new detection techniques, protocol designers adapt, and new protocols emerge to address the latest filtering methods. Forget standard VPN solutions (IPSec, OpenVPN, WireGuard) and plain SOCKS proxies; all of them are easily blocked via protocol fingerprinting.

The protocols examined below represent, or at one point represented, state-of-the-art circumvention. They range from stream cipher wrappers to systems employing post-quantum key exchange. Their technical approaches vary considerably, but all are designed to resist the deep packet inspection, active probing, and traffic analysis techniques documented in peer-reviewed literature2.

The majority of these protocols are well-documented with open-source client implementations such as Xray, V2Ray, Sing-Box, and Mihomo - all written in Go with partial or differing support for various protocol+transport+security combinations.

Protocols and Projects

ShadowSocks

History

ShadowSocks is one of the oldest protocols on this list, first released to the public in 2012 by the Chinese developer clowwindy. It started out as a private Python tool shared on GitHub and has evolved into a still commonly used solution for censorship circumvention, with ports to multiple platforms and languages.3

The original implementation used now-legacy stream ciphers such as AES-CFB and RC4-MD5 and lacked a complex handshake, making it very fast but vulnerable to probing techniques.4 Unfortunately the initial era was short-lived: clowwindy shared that he had been contacted by the police and could no longer maintain the project. The community forked it, leading to a new era in the protocol’s development.

The modern protocol uses AEAD (Authenticated Encryption with Associated Data) ciphers such as AES-GCM and ChaCha20-Poly1305. These ciphers ensure the authenticity, integrity, and confidentiality of traffic. This was a major benefit to the protocol: previously, traffic to ShadowSocks servers could be intercepted and altered in transit to trigger predictable responses that identified the server as running ShadowSocks, after which it could be blocked. AEAD attaches an authentication tag (MAC) to every encrypted chunk; any tampering causes tag verification to fail, and the server silently drops the connection.

The latest rendition (SIP022) enforces even stricter key security requirements, so no 123456. It also makes full encrypted packet replay virtually impossible and switched to Blake3 for its hashing function making it more efficient. Finally, the header and payload are separated and encrypted differently making it harder to fingerprint through data chunk length guessing.

Fundamentals

At its core ShadowSocks operates as a split proxy loosely based on SOCKS5. A local client (ss-local) runs as a SOCKS5 server on your machine and encrypts all traffic before forwarding it to a remote server (ss-remote). The remote decrypts and forwards to the actual destination. Critically, there is no SOCKS5 negotiation between the two halves - the target address is simply embedded as the first bytes of the encrypted stream. No handshake, no method negotiation, no reply. This minimizes round trips and reduces the fingerprint surface considerably.

```
Browser --SOCKS5--> ss-local --[encrypted]--> ss-remote --> target
```

The address is encoded using the SOCKS5 address format: a 1-byte address type (0x01 IPv4, 0x03 domain, 0x04 IPv6), the address itself, and a 2-byte big-endian port. Simple and surgical.
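The address encoding is small enough to sketch directly. The function below is a minimal illustration, not the reference implementation; the name `encode_socks5_address` is chosen here for convenience, and the 1-byte length prefix for domain names follows the standard SOCKS5 address format.

```python
import ipaddress
import struct


def encode_socks5_address(host: str, port: int) -> bytes:
    """Encode host/port as ATYP + address + 2-byte big-endian port."""
    if not 0 <= port <= 0xFFFF:
        raise ValueError("port out of range")
    try:
        ip = ipaddress.ip_address(host)
    except ValueError:
        # Not an IP literal: treat it as a domain name (type 0x03),
        # which carries a 1-byte length prefix before the name.
        name = host.encode("idna")
        if not 1 <= len(name) <= 255:
            raise ValueError("domain name must be 1-255 bytes")
        body = b"\x03" + bytes([len(name)]) + name
    else:
        # 0x01 for a 4-byte IPv4 address, 0x04 for a 16-byte IPv6 address.
        atyp = b"\x01" if ip.version == 4 else b"\x04"
        body = atyp + ip.packed
    return body + struct.pack(">H", port)
```

Calling `encode_socks5_address("example.com", 443)` yields the type byte `0x03`, the length byte `0x0b`, the eleven bytes of the name, and the port as `0x01bb`.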

For AEAD ciphers, a TCP stream begins with a randomly generated salt followed by encrypted chunks. Each chunk is structured as:

```
[encrypted length (2B)][length tag (16B)][encrypted payload][payload tag (16B)]
```

The nonce is a 12-byte little-endian counter starting at zero, incremented after each AEAD operation. Since each chunk involves two operations (one for length, one for payload), the nonce advances by two per chunk. The maximum payload per chunk is 0x3FFF (16,383 bytes) for legacy AEAD or 0xFFFF (65,535 bytes) for SIP022. The session subkey is derived from the master key and a per-connection random salt:

Legacy AEAD:

```
master_key = EVP_BytesToKey(password)
session_key = HKDF_SHA1(key=master_key, salt=random_salt, info="ss-subkey")
```
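For reference, the password-to-key step can be sketched in a few lines. This is a minimal illustration of the OpenSSL-style `EVP_BytesToKey` construction with MD5, no salt, and a single iteration; the function name `evp_bytes_to_key` is chosen here and is not taken from any particular implementation.

```python
import hashlib


def evp_bytes_to_key(password: bytes, key_len: int) -> bytes:
    """Derive key_len bytes by chaining MD5 over (previous digest + password)."""
    derived = b""
    block = b""
    while len(derived) < key_len:
        # Each round hashes the previous digest concatenated with the password.
        block = hashlib.md5(block + password).digest()
        derived += block
    return derived[:key_len]
```

For a 16-byte key the result is simply `MD5(password)`, which shows how little work stands between a weak password and the master key.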

SIP022 (2022 Edition):

```
master_key = base64_decode(pre_shared_key)
session_key = Blake3::derive_key(context="shadowsocks 2022 session subkey", key=master_key + salt)
```

For SIP022, the fixed header includes a Unix epoch timestamp that must be within 30 seconds of the server’s time, and the server maintains a salt pool to reject duplicate salts - making full encrypted packet replay virtually impossible.

Python AEAD Stream Example

A simplified Python implementation of the ShadowSocks AEAD stream encryption gives a clearer picture of how the chunked encryption works in practice. Each chunk involves two AEAD operations - one for the length prefix, one for the payload - with the nonce incrementing after each operation:

```python
import os, struct, hashlib, hmac

AEAD_TAG_SIZE = 16
MAX_PAYLOAD = 0x3FFF  # legacy AEAD chunk limit


def derive_subkey(master_key: bytes, salt: bytes, key_size: int) -> bytes:
    """HKDF-SHA1(master_key, salt, 'ss-subkey') -> session subkey."""
    # HKDF extract
    prk = hmac.new(salt, master_key, hashlib.sha1).digest()
    # HKDF expand: one 20-byte SHA-1 block covers a 16-byte key,
    # a second block is needed for a 32-byte key
    info = b"ss-subkey"
    t = hmac.new(prk, info + b"\x01", hashlib.sha1).digest()
    if key_size > 20:
        t += hmac.new(prk, t + info + b"\x02", hashlib.sha1).digest()
    return t[:key_size]


def encrypt_chunk(cipher, nonce_counter: int, payload: bytes) -> tuple[bytes, int]:
    """Encrypt one SS AEAD chunk: [enc_length + tag][enc_payload + tag].

    `cipher` is any AEAD object exposing encrypt(nonce, data, associated_data).
    """
    if len(payload) > MAX_PAYLOAD:
        raise ValueError("payload exceeds the 0x3FFF chunk limit")

    # Nonce is a 12-byte little-endian counter
    def nonce(n: int) -> bytes:
        return n.to_bytes(12, "little")

    # First AEAD operation: encrypt the 2-byte payload length
    len_plain = struct.pack(">H", len(payload))
    enc_len = cipher.encrypt(nonce(nonce_counter), len_plain, None)
    nonce_counter += 1
    # Second AEAD operation: encrypt the payload itself
    enc_payload = cipher.encrypt(nonce(nonce_counter), payload, None)
    nonce_counter += 1
    return enc_len + enc_payload, nonce_counter
```

The SIP022 (2022 Edition) implementation replaces HKDF-SHA1 with Blake3’s derive-key mode, and swaps EVP_BytesToKey password derivation for a mandatory base64-encoded pre-shared key:

```python
from blake3 import blake3

BLAKE3_CONTEXT = "shadowsocks 2022 session subkey"


def derive_2022_subkey(master_key: bytes, salt: bytes, key_size: int) -> bytes:
    """Blake3 key derivation for Shadowsocks 2022."""
    key_material = master_key + salt
    hasher = blake3(key_material, derive_key_context=BLAKE3_CONTEXT)
    return hasher.digest(length=key_size)
```

The 2022 edition also adds a fixed header to the first encrypted chunk containing a type byte (0x00 client-to-server, 0x01 server-to-client) and an 8-byte Unix epoch timestamp. The server validates that this timestamp is within 30 seconds of its own clock and checks the request salt against a pool - if the salt was seen before, the connection is silently dropped. This makes replay attacks practically impossible: a replayed message reuses a salt the server has already seen, and producing a valid message under a fresh salt requires the pre-shared key.

ShadowSocks configuration URLs might look like the below:

```
ss://[email protected]:8388#Example
ss://2022-blake3-aes-256-gcm:[email protected]:8388#SS2022
```

Challenges

The GFW’s detection of ShadowSocks evolved with the protocol. Legacy stream cipher versions were vulnerable to active probing that exploited ciphertext malleability: the GFW could capture the first encrypted packet and systematically flip bits in the encrypted SOCKS5 address type byte. Since stream ciphers like AES-CFB are malleable (flipping bit N in the ciphertext flips bit N in the plaintext), different modifications caused the destination server to expect different address lengths. This produced observable timing differences, and a handful of modified replays could confirm a ShadowSocks endpoint with high confidence.
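The bit-flipping property is easy to demonstrate with a toy example. The sketch below uses a SHA-256 counter keystream as a stand-in for a real stream cipher (it is not AES-CFB, and the key, IV, and helper names are made up for illustration); what matters is only that encryption is an XOR with a keystream.

```python
import hashlib


def toy_keystream(key: bytes, iv: bytes, length: int) -> bytes:
    """Stand-in keystream (SHA-256 in counter mode); NOT AES-CFB."""
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + iv + counter.to_bytes(4, "big")).digest()
        counter += 1
    return out[:length]


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """XOR two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


key, iv = b"k" * 16, b"i" * 16
# Plaintext header: ATYP 0x01 (IPv4), 1.2.3.4, port 443, then the payload.
plaintext = b"\x01\x01\x02\x03\x04\x01\xbb" + b"GET / HTTP/1.1\r\n"
ciphertext = xor_bytes(plaintext, toy_keystream(key, iv, len(plaintext)))

# The prober never learns the key; it only XORs the first ciphertext byte.
# Since 0x01 ^ 0x02 == 0x03, the server now decrypts ATYP "domain name".
tampered = bytes([ciphertext[0] ^ 0x02]) + ciphertext[1:]
decrypted = xor_bytes(tampered, toy_keystream(key, iv, len(tampered)))

print(hex(plaintext[0]), "->", hex(decrypted[0]))
```

The rest of the decrypted message is untouched, so the server goes on to interpret the following byte as a domain length and waits for that many bytes, which is the behavioral difference a prober can observe.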

AEAD ciphers fixed the malleability issue (any modification causes tag verification failure and silent drop), but the GFW adapted. The landmark paper “How the Great Firewall of China Detects and Blocks Fully Encrypted Traffic” (USENIX Security 2023) documented a two-stage approach: first, traffic is flagged if it fails to match any known protocol signature (no TLS 0x16 0x03 header, no HTTP method, no SSH version string, and near-uniform byte entropy). Second, the captured first message is replayed verbatim to the server - and since legacy AEAD has no replay protection, the server successfully decrypts and proxies the embedded request, confirming the endpoint.
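A first-stage filter of this kind can be approximated in a few lines. The sketch below is only loosely modelled on the heuristics described above: the prefix list, the bits-per-byte bounds, and the printable-ASCII threshold are illustrative assumptions, not the GFW’s actual rules.

```python
KNOWN_PREFIXES = (b"GET ", b"POST ", b"HEAD ", b"PUT ", b"SSH-", b"\x16\x03")


def looks_fully_encrypted(first_payload: bytes) -> bool:
    """Return True if the payload has no known header and looks random."""
    if not first_payload:
        return False
    # Anything that starts like HTTP, SSH, or a TLS record is left alone.
    if first_payload.startswith(KNOWN_PREFIXES):
        return False
    # Uniformly random data averages about 4 set bits per byte.
    set_bits = sum(bin(b).count("1") for b in first_payload)
    bits_per_byte = set_bits / len(first_payload)
    if not 3.4 <= bits_per_byte <= 4.6:
        return False
    # A payload that is mostly printable ASCII is probably a text protocol.
    printable = sum(0x20 <= b <= 0x7E for b in first_payload)
    return printable / len(first_payload) <= 0.5
```

A check like this is cheap enough to run on every new flow, which is why it works as a pre-filter for the far more expensive replay probes.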

SIP022 directly addresses replay by maintaining a per-server salt pool and embedding a validated timestamp. As of writing, no published research demonstrates active detection of SIP022 by the GFW. However, the passive entropy-based heuristic from the USENIX paper still flags the traffic as suspicious - SIP022 bytes remain uniformly random with no identifiable protocol header. The GFW simply cannot confirm it through replay anymore.

From an implementation perspective, the original EVP_BytesToKey derivation (repeated MD5 with no salt and no iterations) was effectively a non-KDF that made weak passwords trivially brute-forceable. The SIP022 replay filter also needs careful engineering - the salt pool must be bounded in memory, thread-safe under concurrent connections, and time-windowed to evict expired entries without allowing a replay gap.
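One way to meet those three requirements is sketched below. The class name `SaltReplayFilter`, its parameters, and the fail-closed behavior when the pool is full are assumptions made for illustration; real servers may choose differently.

```python
import threading
import time
from collections import OrderedDict
from typing import Optional


class SaltReplayFilter:
    """Reject stale timestamps and salts already seen inside the window."""

    def __init__(self, window: float = 30.0, max_entries: int = 100_000) -> None:
        self._window = window
        self._max_entries = max_entries
        self._seen: "OrderedDict[bytes, float]" = OrderedDict()
        self._lock = threading.Lock()

    def accept(self, salt: bytes, timestamp: float, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        if abs(now - timestamp) > self._window:
            return False  # outside the allowed clock skew
        with self._lock:
            # Evict salts first seen more than two windows ago (oldest first).
            horizon = now - 2 * self._window
            while self._seen:
                _, seen_at = next(iter(self._seen.items()))
                if seen_at >= horizon:
                    break
                self._seen.popitem(last=False)
            if salt in self._seen:
                return False  # replayed salt
            if len(self._seen) >= self._max_entries:
                return False  # pool full: fail closed rather than forget salts
            self._seen[salt] = now
            return True
```

Entries are kept for twice the window because a message stamped up to 30 seconds in the future is first accepted at time t and still carries a valid timestamp until roughly t + 60; evicting any earlier would open exactly the replay gap mentioned above.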

ShadowSocksR

History

ShadowSocksR was forked from the original ShadowSocks by a developer known as breakwa11 shortly after clowwindy’s forced departure in August 2015. The stated motivation was straightforward - ShadowSocks traffic was too easy for the GFW to detect, and SSR would bolt on obfuscation and protocol-level enhancements to address this.

The project immediately became controversial with the Windows client initially being released as closed-source in violation of the GPL license. clowwindy himself criticized breakwa11 for extracting features from upstream ShadowSocks without contributing improvements back, while keeping SSR’s own enhancements proprietary. He also challenged SSR’s claimed security improvements, arguing that developing cryptographic algorithms behind closed doors without peer review was contrary to sound security practice.5

The project’s end came through a targeted harassment campaign in July 2017. Members of a notorious doxxing group called ESU.TV threatened to release personal information unless SSR development ceased. To prevent further harm to uninvolved third parties whose information was being exposed, breakwa11 deleted all GitHub repositories, disbanded communication groups, and permanently halted the project. Community forks live on under the shadowsocksrr organization, though the protocol is considered legacy at this point.

Fundamentals

The key architectural difference from ShadowSocks was SSR’s dual plugin system: a protocol plugin layer and an obfuscation (obfs) plugin layer, both wrapping the standard ShadowSocks encryption.

```
plaintext → protocol_plugin → SS_encrypt(stream_cipher + IV) → obfs_plugin → socket
```

The protocol plugins handled authentication and anti-replay protection. The most commonly used were auth_aes128_md5 and auth_aes128_sha1, which used AES-128-CBC encrypted handshakes with HMAC verification, sequential connection IDs for anti-replay, and UID-based multi-user support on a single port. The auth_chain_a variant was the most advanced, employing Encrypt-then-MAC mode and xorshift128plus-derived random padding lengths.

The obfuscation plugins handled traffic disguise. http_simple and http_post would dress up the first packet as an HTTP request with configurable Host headers. The most sophisticated was tls1.2_ticket_auth, which simulated a TLS 1.2 handshake with session ticket resumption - since a ticket is used, there are no certificate transmission steps for firewalls to validate against. It included SNI parameter support and inherent replay attack resistance.

Python Auth Plugin Example

The auth_aes128_md5 protocol plugin is the most instructive to examine. The first packet sent includes a 20-byte authentication header constructed using AES-128-CBC, followed by chunked data with HMAC integrity tags:

```python
import hashlib
import os

# hmac_fn(protocol, key, data) and aes_cbc_encrypt(key, iv, data) are helpers not shown here.


def build_auth_header(key: bytes, protocol: str) -> tuple[bytes, bytes]:
    """Build auth_aes128_md5/sha1 header: uid(4) + enc_auth(12) + hmac(4)."""
    auth_rand = os.urandom(4)
    uid = hmac_fn(protocol, key, auth_rand)[:4]
    # AES-128-CBC encrypt the authentication payload
    aes_key = hashlib.md5(key + uid).digest()
    aes_iv = hashlib.md5(auth_rand + key).digest()
    plain = auth_rand + os.urandom(4) + b"\x00" * 8  # pad to 16 bytes
    enc_auth = aes_cbc_encrypt(aes_key, aes_iv, plain)[:12]
    hmac_key = key + uid
    header_hmac = hmac_fn(protocol, hmac_key, uid + enc_auth)[:4]
    return uid + enc_auth + header_hmac, hmac_key
```

Each subsequent data chunk is then wrapped with a sequential ID and HMAC for anti-replay and integrity:

```python
import struct


def pack_chunk(data: bytes, hmac_key: bytes, send_id: int, protocol: str) -> bytes:
    """Wrap data in SSR chunk: length(2B, LE) + hmac(4B) + data."""
    id_bytes = struct.pack("<I", send_id)
    len_bytes = struct.pack("<H", len(data))
    # The sequential ID is mixed into the HMAC key, so a chunk replayed
    # under a different ID fails verification
    hmac_val = hmac_fn(protocol, hmac_key + id_bytes, len_bytes + data)[:4]
    return len_bytes + hmac_val + data
```
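The receiving side of this framing can be sketched as the mirror image. Both functions below are illustrative assumptions: `hmac_fn` is given a plausible definition (HMAC-MD5 or HMAC-SHA1 chosen by the plugin name), and `unpack_chunk` is a hypothetical name for the verification step rather than code taken from any SSR fork.

```python
import hashlib
import hmac
import struct


def hmac_fn(protocol: str, key: bytes, data: bytes) -> bytes:
    """Assumed helper: HMAC-MD5 or HMAC-SHA1 depending on the plugin name."""
    digest = hashlib.md5 if protocol.endswith("md5") else hashlib.sha1
    return hmac.new(key, data, digest).digest()


def unpack_chunk(buf: bytes, hmac_key: bytes, recv_id: int, protocol: str) -> tuple[bytes, bytes]:
    """Parse one chunk laid out as length(2B, LE) + hmac(4B) + data.

    Returns (data, remaining_bytes); raises ValueError on a bad tag.
    """
    if len(buf) < 6:
        raise ValueError("incomplete chunk header")
    (length,) = struct.unpack("<H", buf[:2])
    tag, data = buf[2:6], buf[6:6 + length]
    if len(data) < length:
        raise ValueError("incomplete chunk body")
    # The expected ID is part of the HMAC key, so an out-of-order or
    # replayed chunk produces a different tag.
    id_bytes = struct.pack("<I", recv_id)
    expected = hmac_fn(protocol, hmac_key + id_bytes, buf[:2] + data)[:4]
    if not hmac.compare_digest(tag, expected):
        raise ValueError("HMAC mismatch (tampered, reordered, or replayed chunk)")
    return data, buf[6 + length:]
```

Note that the tag is truncated to 4 bytes, so it offers far less forgery resistance than the 16-byte tags of the AEAD ciphers discussed earlier.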

The full first-packet send path illustrates the layering clearly - the SOCKS5 address is prepended to the payload, then wrapped in the protocol plugin, then encrypted with the stream cipher, then the IV is prepended:

```python
# encode_socks5_address(host, port) is a helper not shown here.
def first_send(sock, stream_cipher, host, port, data, protocol, key):
    iv = stream_cipher.init_encrypt()  # Generate random IV
    socks_addr = encode_socks5_address(host, port)
    plaintext = socks_addr + data  # Target address + payload
    auth_header, hmac_key = build_auth_header(key, protocol)
    proto_data = auth_header + pack_chunk(plaintext, hmac_key, send_id=1, protocol=protocol)
    encrypted = stream_cipher.encrypt(proto_data)
    sock.sendall(iv + encrypted)  # IV is sent in the clear
```

While SSR served its purpose at the time, its obfuscation was fundamentally bolted onto ShadowSocks rather than designed from the ground up. Modern protocols like Trojan take the opposite approach entirely - operating within genuine TLS connections rather than simulating them. SSR also never moved beyond stream ciphers to AEAD, leaving it vulnerable to the same class of active probing attacks that plagued early ShadowSocks.6

ShadowSocksR configuration URLs might look like the below:

```
ssr://MTIzLjEuMi4zOjIwMTk1Om9yaWdpbjphZXMtMjU2LWNmYjpwbGFpbjpjR0Z6YzNkdmNtUT0vP3JlbWFya3M9UlhoaGJYQnNaUSZ
ssr://MTIzLjEuMi4zOjIwMTk1OmF1dGhfYWVzMTI4X21kNTphZXMtMjU2LWNmYjp0bHMxLjJfdGlja2V0X2F1dGg6Y0dGemMzZHZjbVE9Lz9vYmZzcGFyYW09WTJ4dmRXUm1iR0Z5WlM1amIyMCZyZW1hcmtzPVJYaGhiWEJzWlE
```
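These URLs are simply URL-safe base64 over a colon-separated record of the form `host:port:protocol:method:obfs:base64(password)/?key=base64(value)&...`. A minimal parser sketch follows; the function names and the returned dictionary keys are chosen here for illustration.

```python
import base64
from urllib.parse import parse_qs


def b64url_decode(text: str) -> bytes:
    """URL-safe base64 decode that tolerates missing '=' padding."""
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def parse_ssr_url(url: str) -> dict:
    """Split an ssr:// URL into its fields, decoding nested base64 values."""
    if not url.startswith("ssr://"):
        raise ValueError("not an ssr:// URL")
    decoded = b64url_decode(url[len("ssr://"):]).decode("utf-8")
    main, _, query = decoded.partition("/?")
    # rsplit from the right keeps any colons inside the host (e.g. IPv6) intact.
    host, port, protocol, method, obfs, password_b64 = main.rsplit(":", 5)
    params = {
        name: b64url_decode(values[0]).decode("utf-8")
        for name, values in parse_qs(query).items()
    }
    return {
        "host": host,
        "port": int(port),
        "protocol": protocol,
        "method": method,
        "obfs": obfs,
        "password": b64url_decode(password_b64).decode("utf-8"),
        "params": params,
    }
```

Since nothing in the URL is encrypted, anyone holding a subscription link also holds the server address and password in recoverable form.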

Challenges

SSR’s obfuscation turned out to be more brittle than advertised. The http_simple and http_post plugins generated HTTP headers that failed basic compliance checks - missing required headers, incorrect ordering, and the telltale transition from structured HTTP in the first packet to raw high-entropy data in all subsequent packets. Any middlebox running even rudimentary HTTP validation could flag this.

The tls1.2_ticket_auth plugin was more sophisticated but suffered from a fatal flaw: the ClientHello cipher suites, extensions, and ordering were hardcoded at development time and never updated. As real browsers evolved (Chrome iterating cipher suites roughly monthly), the SSR fingerprint became a frozen artifact that matched no real-world browser. JA3 fingerprinting - which hashes the ClientHello’s cipher suites, extensions, and elliptic curves into a short identifier - could trivially distinguish SSR’s fake TLS from genuine browser connections. The “ServerHello” was also fabricated locally with no real certificate chain, meaning any probe that attempted certificate validation would immediately identify the deception.
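To make the JA3 idea concrete, here is a minimal sketch of the hash computation. JA3 joins five ClientHello fields (TLS version, cipher suites, extensions, elliptic curves, and EC point formats) as decimal values and takes the MD5 of the resulting string; the sample values in the usage lines are hypothetical and do not correspond to SSR or to any specific browser.

```python
import hashlib


def ja3_fingerprint(version, ciphers, extensions, curves, point_formats) -> str:
    """Return the JA3 hash: MD5 over five comma-separated decimal fields."""
    fields = [
        str(version),
        "-".join(map(str, ciphers)),
        "-".join(map(str, extensions)),
        "-".join(map(str, curves)),
        "-".join(map(str, point_formats)),
    ]
    return hashlib.md5(",".join(fields).encode("ascii")).hexdigest()


# Hypothetical ClientHello: TLS 1.2 (771), three cipher suites, four extensions.
frozen = ja3_fingerprint(771, [49195, 49199, 156], [0, 10, 11, 35], [29, 23], [0])
# The same client after reordering its cipher suites hashes differently.
updated = ja3_fingerprint(771, [49199, 49195, 156], [0, 10, 11, 35], [29, 23], [0])
print(frozen != updated)
```

Because even a reordering changes the hash, a ClientHello that never changes stays matchable by a single static rule for as long as the client is in use.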

The dual plugin system also created implementation headaches. The combinatorial explosion of protocol plugins (auth_chain_a through auth_chain_f, auth_aes128_md5, etc.) crossed with obfuscation plugins produced dozens of configurations, not all of which were correctly implemented across the various SSR forks (Python, C-libev, CSharp). The protocol_param parsing was fragile and under-documented, leading to silent interoperability failures. Most critically, SSR never migrated beyond stream ciphers to AEAD, leaving the base encryption layer vulnerable to the same class of active probing attacks that plagued early ShadowSocks.

Hysteria

History

The first commit for this project was made on 09/04/2020. Hysteria describes itself as a successor to the abandoned Dragonite project and is built on the quic-go library. QUIC, the transport protocol underlying HTTP/3, was created by Google; at a high level it runs over UDP, supports stream multiplexing, and reduces connection setup latency by combining the transport and cryptographic handshakes. Hysteria, however, made some modifications that made it slightly more versatile at the time of its conception.

Fundamentals

The fundamentals of the first Hysteria iteration are roughly as follows: a modified Draft-29 QUIC implementation designed to disregard QUIC’s congestion control safety mechanisms. It ignores packet loss (missing ACK frames) and prioritizes retransmission, all for the purpose of maximising throughput over network ‘fairness’. This modified congestion control system was dubbed Brutal.

Building on Draft-29 was a slight and possibly accidental advantage at the time, as both the salt used to derive initial secrets and the version field of the QUIC long header in Draft-29 differ from those of the final RFC 9000.7

QUIC-29:

- Initial Secrets Salt (TLS): 0xafbfec289993d24c9e9786f19c6111e04390a899
- Version Field: 0xff00001d = 0xff000000 + 29

RFC 9000:

- Initial Secrets Salt (TLS): 0x38762cf7f55934b34d179ae6a4c80cadccbb7f0a
- Version Field: 0x00000001
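The version field sits in cleartext directly after the first byte of every long-header packet, so telling the two apart takes only a few lines. The sketch below is a simplified illustration (the function name and labels are made up here, and it ignores everything after the version field):

```python
import struct

QUIC_VERSIONS = {
    0x00000001: "QUIC v1 (RFC 9000)",
    0xFF00001D: "draft-29",
}


def classify_long_header(packet: bytes) -> str:
    """Label a UDP payload by its QUIC long-header version field, if any."""
    # Long headers have the most significant bit of the first byte set.
    if len(packet) < 5 or not packet[0] & 0x80:
        return "not a long-header packet"
    (version,) = struct.unpack(">I", packet[1:5])
    if version in QUIC_VERSIONS:
        return QUIC_VERSIONS[version]
    # IETF drafts were numbered 0xff000000 + draft number.
    if (version & 0xFFFFFF00) == 0xFF000000:
        return f"IETF draft-{version & 0xFF}"
    return f"unknown version 0x{version:08x}"
```

A device that only knows the RFC 9000 value would file a draft-29 packet under "unknown".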

This meant that in the early days of QUIC adoption, network devices looking for ‘genuine’ QUIC connections based on the RFC specification may have been more likely to dismiss these connections as garbage. Naturally, improving the protocol’s resilience against fingerprinting required more than these accidental graces. This is the key purpose X-Plus served, by adding a binary header (a salt) to every UDP packet and XOR-obfuscating the entire QUIC packet.

[16-byte Salt] + [QUIC Packet ⊕ (SHA-256(Key+Salt))]

The salt is generated fresh for every packet Hysteria transmits. It is concatenated with the pre-shared obfs password from the configuration (which is distinct from the authentication password) and hashed with SHA-256. The resulting 32-byte hash is then used as a repeating XOR key over the entire QUIC packet. This helped hide the QUIC protocol in Hysteria traffic as fingerprinting of QUIC versions improved.

Hysteria configuration URLs might look like the below:

```
hysteria://[email protected]:20195?type=quic&quicSecurity=none&headerType=none&security=tls&upmbps=100&downmbps=100
hy://[email protected]:20194?type=quic&quicSecurity=none&headerType=none&security=tls&obfs=xplus_obfs_secret&upmbps=100&downmbps=100
```

Python XPlus Obfuscation Example

Hysteria’s X-Plus would be relatively easy to implement in Python, as an example:

```python
import hashlib
import os
import socket


class XPlusSocket:
    # Wrap a UDP socket and transparently obfuscate/deobfuscate packets using SHA-256-based XOR.
    SALT_LEN = 16  # X-Plus uses 16-byte salt

    def __init__(self, sock: socket.socket, password: str | bytes) -> None:
        # Initialize X-Plus socket wrapper
        self._sock = sock
        self._key = password.encode('utf-8') if isinstance(password, str) else password

    @staticmethod
    def _xor_with_key(data: bytes, key: bytes) -> bytes:
        # XOR data with the repeating 32-byte key using bulk int operations
        dlen = len(data)
        if dlen == 0:
            return b""
        klen = len(key)
        key_stream = (key * ((dlen // klen) + 1))[:dlen]
        return (int.from_bytes(data, "big") ^ int.from_bytes(key_stream, "big")).to_bytes(dlen, "big")

    def sendto(self, data: bytes, addr: tuple[str, int]) -> int:
        # Prepend a random 16-byte salt and XOR the payload with the derived key
        salt = os.urandom(self.SALT_LEN)
        key = hashlib.sha256(self._key + salt).digest()
        encrypted = self._xor_with_key(data, key)
        packet = salt + encrypted
        ...

    def recvfrom(self, bufsize: int) -> tuple[bytes, tuple[str, int]]:
        # Extract the 16-byte salt and XOR-decrypt the QUIC response
        packet, addr = self._sock.recvfrom(bufsize)
        if len(packet) < self.SALT_LEN:
            return (b"", addr)
        salt = packet[:self.SALT_LEN]
        encrypted = packet[self.SALT_LEN:]
        key = hashlib.sha256(self._key + salt).digest()
        decrypted = self._xor_with_key(encrypted, key)
        ...
```

Challenges

The most challenging part of implementing this protocol would be building a correctly aligned Draft-29 QUIC transport, for which the aioquic library could serve as a rough reference.

This project has since been superseded by the Hysteria2 protocol and its numerous performance and anonymity improvements. Thus there is minimal pay-off in utilizing or fingerprinting this protocol.

Hysteria2

This successor and update to the Hysteria project was first publicly released 26/05/2023 but only saw a stable release months later on 02/09/2023 with v2.0.0. Hysteria2 is a complete rewrite - not a patch or increment. The two versions are entirely incompatible.

Fundamentals

The most significant change was the approach. While the original Hysteria ran a custom binary protocol atop a modified QUIC implementation, Hysteria2 fully embraces standard RFC 9000 QUIC with TLS 1.3 and layers HTTP/3 (RFC 9114) on top. The QUIC Initial packets, version fields, TLS handshake, and ALPN negotiation all look identical to legitimate HTTP/3 traffic. A Hysteria2 server is, quite literally, a functioning HTTP/3 web server.

Authentication moved from the custom binary ClientHello/ServerHello exchange to an HTTP/3 POST request:

```
:method: POST
:path: /auth
:host: hysteria
Hysteria-Auth: [password]
Hysteria-CC-RX: [bandwidth in bytes/sec]
Hysteria-Padding: [random padding string]
```

If authentication succeeds, the server responds with HTTP status 233 (“HyOK”). If it fails - and this is the clever part - the server behaves exactly like a normal web server. It can return a 404, serve a real webpage, or proxy the request to an upstream site. An active probe connecting without Hysteria credentials receives a genuine HTTP/3 response, making the server indistinguishable from a standard CDN edge node or reverse proxy.

After authentication, TCP proxy data flows over QUIC bidirectional streams using frame type 0x401, while UDP uses the QUIC Unreliable Datagram Extension with 0-RTT session establishment. The Brutal congestion control remains, though bandwidth negotiation now occurs through HTTP headers rather than binary fields. A client can send 0 to indicate unknown bandwidth (falling back to BBR/Cubic), and the server can respond with "auto" to refuse the client’s specification entirely.

Python HTTP/3 Authentication Example

The authentication flow itself is straightforward to illustrate. After establishing a QUIC connection with TLS 1.3, the client sends an HTTP/3 POST request with custom headers. The QUIC variable-length integer encoding used throughout the protocol is worth noting - it uses the top 2 bits to signal the field length:

```python
import struct


def encode_quic_varint(value: int) -> bytes:
    """Encode a QUIC variable-length integer (RFC 9000 Section 16)."""
    if value < 0:
        raise ValueError("Varints cannot be negative")
    if value < 2**6:
        return struct.pack(">B", value)  # prefix bits 00, 1 byte
    elif value < 2**14:
        return struct.pack(">H", 0x4000 | value)  # prefix bits 01, 2 bytes
    elif value < 2**30:
        return struct.pack(">I", 0x80000000 | value)  # prefix bits 10, 4 bytes
    elif value < 2**62:
        return struct.pack(">Q", 0xC000000000000000 | value)  # prefix bits 11, 8 bytes
    raise ValueError("Value too large for varint encoding")
```
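For completeness, the decoding direction can be sketched as follows. The function name `decode_quic_varint` and its `(value, next_offset)` return convention are choices made here for illustration.

```python
def decode_quic_varint(buf: bytes, offset: int = 0) -> tuple[int, int]:
    """Decode one QUIC varint from buf; return (value, next_offset)."""
    if offset >= len(buf):
        raise ValueError("buffer too short")
    first = buf[offset]
    # The top two bits select a total length of 1, 2, 4, or 8 bytes.
    length = 1 << (first >> 6)
    if offset + length > len(buf):
        raise ValueError("truncated varint")
    # The remaining six bits of the first byte are the most significant bits.
    value = first & 0x3F
    for byte in buf[offset + 1:offset + length]:
        value = (value << 8) | byte
    return value, offset + length
```

Returning the next offset makes it easy to walk a buffer that contains several varint-prefixed fields in a row, which is how the Hysteria2 frames are laid out.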

The authentication request is sent as standard HTTP/3 headers over the QUIC connection:

```python
HYSTERIA2_STATUS_OK = 233
FRAME_TYPE_TCP_REQUEST = 0x401


# generate_random_padding(min_len, max_len) is a helper not shown here.
async def authenticate(h3_conn, quic_conn, password: str, bandwidth: int):
    """Perform Hysteria2 HTTP/3 authentication."""
    stream_id = quic_conn.get_next_available_stream_id()
    headers = [
        (b":method", b"POST"),
        (b":scheme", b"https"),
        (b":authority", b"hysteria"),
        (b":path", b"/auth"),
        (b"hysteria-auth", password.encode("utf-8")),
        (b"hysteria-cc-rx", str(bandwidth).encode()),
        (b"hysteria-padding", generate_random_padding(64, 512).encode()),
    ]
    h3_conn.send_headers(stream_id, headers, end_stream=True)
    # Server responds with HTTP 233 on success, or a normal HTTP response on failure
```

After authentication succeeds, opening a TCP tunnel uses QUIC bidirectional streams with the Hysteria2 frame format. The destination address is encoded as a plain host:port string with a QUIC varint length prefix:

```python
async def open_tcp_tunnel(quic_conn, host: str, port: int):
    """Open a TCP tunnel stream to the destination."""
    stream_id = quic_conn.get_next_available_stream_id()
    address = f"{host}:{port}".encode("utf-8")
    frame = (
        encode_quic_varint(FRAME_TYPE_TCP_REQUEST) +  # 0x401
        encode_quic_varint(len(address)) +            # Address length
        address +                                     # "example.com:443"
        encode_quic_varint(0)                         # No padding
    )
    quic_conn.send_stream_data(stream_id, frame)
    # Server responds: Status(1B) + MsgLen(varint) + Msg + PadLen(varint) + Pad
    # Status 0 = OK, 1 = Error
```
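Parsing the server’s reply follows directly from the layout in the comment above. This is a minimal sketch with hypothetical function names; it assumes the whole response is already buffered and discards the padding.

```python
def read_varint(buf: bytes, offset: int) -> tuple[int, int]:
    """Decode one QUIC varint; return (value, next_offset)."""
    if offset >= len(buf):
        raise ValueError("buffer too short")
    length = 1 << (buf[offset] >> 6)  # 1, 2, 4 or 8 bytes
    if offset + length > len(buf):
        raise ValueError("truncated varint")
    value = buf[offset] & 0x3F
    for byte in buf[offset + 1:offset + length]:
        value = (value << 8) | byte
    return value, offset + length


def parse_tcp_response(buf: bytes) -> tuple[bool, str]:
    """Parse Status(1B) + MsgLen(varint) + Msg + PadLen(varint) + Pad."""
    if not buf:
        raise ValueError("empty response")
    ok = buf[0] == 0x00  # 0 = OK, 1 = Error
    msg_len, pos = read_varint(buf, 1)
    if pos + msg_len > len(buf):
        raise ValueError("truncated message")
    message = buf[pos:pos + msg_len].decode("utf-8", errors="replace")
    pad_len, pos = read_varint(buf, pos + msg_len)
    if pos + pad_len > len(buf):
        raise ValueError("truncated padding")
    return ok, message
```

After a successful status, everything that follows on the stream is raw proxied TCP data.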

Salamander Obfuscation

The obfuscation layer was also redesigned. X-Plus was replaced by Salamander, which swaps SHA-256 for BLAKE2b-256 and reduces the salt from 16 bytes to 8:

[8-byte Salt] + [QUIC Packet ⊕ (BLAKE2b-256(Key+Salt))]

The implementation is nearly identical in structure to the X-Plus example above, just with the hash and salt swapped:

```python
import hashlib
import os
import socket


class SalamanderSocket:
    SALT_LEN = 8  # Reduced from X-Plus' 16
    KEY_LEN = 32  # BLAKE2b-256 output

    def __init__(self, sock: socket.socket, password: str | bytes) -> None:
        self._sock = sock
        self._psk = password.encode('utf-8') if isinstance(password, str) else password

    @staticmethod
    def _xor_with_key(data: bytes, key: bytes) -> bytes:
        """XOR data with a repeating 32-byte key using bulk int operations."""
        dlen = len(data)
        if dlen == 0:
            return b""
        key_stream = (key * ((dlen // 32) + 1))[:dlen]
        return (int.from_bytes(data, "big") ^ int.from_bytes(key_stream, "big")).to_bytes(dlen, "big")

    def sendto(self, data: bytes, addr: tuple[str, int]) -> int:
        # Fresh salt per packet, key = BLAKE2b-256(psk + salt)
        salt = os.urandom(self.SALT_LEN)
        key = hashlib.blake2b(self._psk + salt, digest_size=self.KEY_LEN).digest()
        encrypted = self._xor_with_key(data, key)
        self._sock.sendto(salt + encrypted, addr)
        return len(data)

    def recvfrom(self, bufsize: int) -> tuple[bytes, tuple[str, int]]:
        packet, addr = self._sock.recvfrom(bufsize)
        # Packets with no payload after the salt are discarded
        if len(packet) <= self.SALT_LEN:
            return (b"", addr)
        salt, encrypted = packet[:self.SALT_LEN], packet[self.SALT_LEN:]
        key = hashlib.blake2b(self._psk + salt, digest_size=self.KEY_LEN).digest()
        return (self._xor_with_key(encrypted, key), addr)
```

It is worth noting that the Hysteria developers explicitly discourage using Salamander unless necessary. Enabling it makes the server incompatible with standard QUIC connections, meaning it no longer functions as a valid HTTP/3 server. Fully randomized traffic patterns can actually be easier to detect than traffic that looks like legitimate HTTP/3 - which somewhat defeats the purpose of the masquerade approach. Use it only when your network specifically blocks QUIC/HTTP/3 but not UDP in general.8

Hysteria2 configuration URLs might look like the below:

```
hy2://[email protected]:20195?sni=example.com&insecure=0
hy2://[email protected]:20195?obfs=salamander&obfs-password=obfs_secret&sni=example.com
```

Challenges

Hysteria2’s primary threat is not protocol-specific, but the GFW’s approach to QUIC as a whole. Starting around late 2021, the GFW began throttling QUIC traffic to foreign servers by identifying QUIC initial packets using their fixed structural features (Form Bit = 1, Fixed Bit = 1, and the cleartext version field). Rather than RST injection (as with TCP), the GFW drops subsequent UDP packets to the same 5-tuple after flagging the flow. This affects all QUIC-based protocols equally - Hysteria2, TUIC, Juicity, and MASQUE.

Even if the QUIC blocking is circumvented, the Brutal congestion control creates a distinctive fingerprint. Standard congestion algorithms (BBR, Cubic) create identifiable bandwidth patterns - ramp up, detect congestion, back off, repeat. Brutal maintains a flat, constant send rate regardless of packet loss. ML-based traffic classifiers can separate this anomalous pattern from legitimate HTTP/3 flows. Setting the bandwidth to 0 (falling back to standard BBR) eliminates this fingerprint but kinda defeats the purpose of choosing Hysteria in the first place.

Port hopping - where the client cycles through destination UDP ports on a timer - helps evade per-flow blocking but creates its own fingerprint: many short-lived UDP flows from one source IP to one destination IP across sequential ports with consistent bandwidth. If the GFW blocks by destination IP rather than by individual flows, port hopping provides no benefit at all.

Implementing Hysteria2 from scratch requires a full RFC 9000 QUIC stack with TLS 1.3 and HTTP/3 support, which is a substantial engineering effort. The reference implementation builds on quic-go, but alternative implementations (e.g., in Python using aioquic) must handle connection migration, 0-RTT resumption, stream multiplexing, and proper QUIC congestion control - all while layering the Hysteria2 authentication and framing protocol on top.

VMess

History

VMess is the original encrypted protocol of the V2Ray project (Project V), created by a developer under the pseudonym Victoria Raymond and first released on 18/09/2015. V2Ray was explicitly developed as a more versatile, modular successor to ShadowSocks - a form of protest against the governmental suppression that forced clowwindy out. Victoria Raymond mysteriously disappeared in February 2019, with all her online accounts going silent. The community forked the project to V2Fly to maintain continuity.

Fundamentals

VMess is a stateless, TCP-based encrypted protocol that requires no handshake. Authentication is based on a UUID shared between client and server. A request consists of three parts:

Authentication (16 bytes): An HMAC-MD5 hash of the user’s UUID and a UTC timestamp (with +/- 30-second tolerance). The server uses this to identify the connecting user and validates the timestamp to prevent replay outside the window.

Instruction Section: Contains the encryption IV (16B), encryption key (16B), response authentication value (1B), option flags, cipher selection, command type (0x01 TCP or 0x02 UDP), destination port, destination address, and an FNV1a checksum for integrity.

Data Section: The proxied payload, optionally chunked with length obfuscation and padding.
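The authentication part of the request described above can be sketched in a few lines. This is a minimal sketch, assuming the UUID bytes are the HMAC key and the timestamp is encoded as an 8-byte big-endian integer; the names `legacy_auth` and `server_accepts` and the example UUID are hypothetical, and the tolerance value is taken from the description above.

```python
import hashlib
import hmac
import struct
import time
import uuid


def legacy_auth(user_id: uuid.UUID, timestamp: int) -> bytes:
    """16-byte legacy VMess auth: HMAC-MD5 keyed by the UUID over the timestamp."""
    return hmac.new(user_id.bytes, struct.pack(">Q", timestamp), hashlib.md5).digest()


def server_accepts(auth: bytes, user_id: uuid.UUID, now: int, tolerance: int = 30) -> bool:
    """Try every timestamp in the allowed window until one matches."""
    return any(
        hmac.compare_digest(auth, legacy_auth(user_id, t))
        for t in range(now - tolerance, now + tolerance + 1)
    )


user = uuid.UUID("a62330d1-8f43-48ae-b900-c1b123456789")  # example value
now = int(time.time())
token = legacy_auth(user, now - 10)
print(server_accepts(token, user, now))        # inside the window
print(server_accepts(token, user, now + 300))  # long after it
```

Nothing in the 16 bytes is tied to a particular connection, so anyone who captures them can present them again while the window is open.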

The supported body ciphers are AES-128-GCM, ChaCha20-Poly1305, AES-128-CFB (legacy), and none/zero. The original authentication used AES-128-CFB encrypted headers with MD5, but this was replaced with AEAD headers using AES-128-GCM after critical vulnerabilities were discovered in May 2020.

Those vulnerabilities were rather damning. Researchers demonstrated that the legacy MD5 authentication provided no actual authentication of the encrypted header, allowing attackers to manipulate padding length fields across multiple connections and infer VMess usage through timing analysis. The authentication credential had a maximum expiration of 120 seconds, within which captured credentials could be replayed successfully. V2Ray clients also generated distinctive TLS fingerprints through hardcoded cipher suites, enabling identification with near-perfect accuracy. A researcher noted blocking could be accomplished with “one line of iptables rules”.9

Python AEAD Header Sealing Example

The modern AEAD header sealing uses a recursive HMAC-SHA256 KDF with dedicated salt constants. The CmdKey is itself derived from the UUID - so the server needs nothing more than the shared UUID to decrypt the header:

import hashlib

def cmd_key(uuid_bytes: bytes) -> bytes:
    """CmdKey = MD5(UUID + magic_constant). This is the root key for the AEAD header."""
    return hashlib.md5(uuid_bytes + b"c48619fe-8f02-49e0-b9e9-edf763e17e21").digest()

def fnv1a(data: bytes) -> int:
    """FNV-1a 32-bit hash used for instruction section integrity."""
    h = 0x811C9DC5
    for b in data:
        h = ((h ^ b) * 0x01000193) & 0xFFFFFFFF
    return h

The AEAD header is sealed in three parts. First, an AuthID is created by AES-encrypting a timestamp with random padding and a CRC32 checksum - this lets the server identify which user is connecting and validate the timestamp window. Then the instruction section length and payload are each encrypted with AES-128-GCM using KDF-derived keys:

import os
import struct

# KDF salt constants define the key derivation paths
KDFSaltConstVMessAEADKDF = b"VMess AEAD KDF"
KDFSaltConstAuthIDEncryptionKey = b"AES Auth ID Encryption"
KDFSaltConstVMessHeaderPayloadAEADKey = b"VMess Header AEAD Key"
KDFSaltConstVMessHeaderPayloadAEADIV = b"VMess Header AEAD Nonce"

# create_auth_id, kdf16, kdf12 and aes_gcm_seal are helpers that are not shown here
def seal_vmess_aead_header(key: bytes, instruction: bytes) -> bytes:
    """SealVMessAEADHeader: authID(16) + encLen(2+16) + nonce(8) + encPayload(N+16)."""
    auth_id = create_auth_id(key)  # AES-ECB encrypt [timestamp(8) + random(4) + crc32(4)]
    nonce = os.urandom(8)          # Connection nonce for key uniqueness

    # Encrypt instruction length with AES-128-GCM (AAD = auth_id)
    len_key = kdf16(key, [b"VMess Header AEAD Key_Length", auth_id, nonce])
    len_nonce = kdf12(key, [b"VMess Header AEAD Nonce_Length", auth_id, nonce])
    enc_len = aes_gcm_seal(len_key, len_nonce, struct.pack(">H", len(instruction)), aad=auth_id)

    # Encrypt instruction payload with AES-128-GCM (AAD = auth_id)
    pay_key = kdf16(key, [b"VMess Header AEAD Key", auth_id, nonce])
    pay_nonce = kdf12(key, [b"VMess Header AEAD Nonce", auth_id, nonce])
    enc_pay = aes_gcm_seal(pay_key, pay_nonce, instruction, aad=auth_id)

    return auth_id + enc_len + nonce + enc_pay
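The sealing function above calls kdf16 and kdf12 without showing them. One way to express the "recursive HMAC-SHA256" idea is to treat each HMAC level as the hash function of the next level. The sketch below is an assumption-laden illustration: the names `vmess_kdf`, `_hmac_with` and `_keyed` are hypothetical, the nesting order (root salt innermost, last path element outermost) is how the scheme is understood here, and the output has not been checked against test vectors from the reference implementation.

```python
import hashlib
import hmac

BLOCK = 64  # SHA-256 block size, inherited by every nested HMAC level


def _hmac_with(hash_fn, key: bytes, msg: bytes) -> bytes:
    """Textbook HMAC built on an arbitrary bytes -> bytes hash function."""
    if len(key) > BLOCK:
        key = hash_fn(key)
    key = key.ljust(BLOCK, b"\x00")
    inner = hash_fn(bytes(b ^ 0x36 for b in key) + msg)
    return hash_fn(bytes(b ^ 0x5C for b in key) + inner)


def _keyed(parent, salt: bytes):
    """Turn HMAC-with-this-salt into a hash function for the next level."""
    def keyed_hash(msg: bytes) -> bytes:
        return _hmac_with(parent, salt, msg)
    return keyed_hash


def vmess_kdf(key: bytes, path: list[bytes]) -> bytes:
    hash_fn = lambda msg: hashlib.sha256(msg).digest()
    for salt in [b"VMess AEAD KDF", *path]:
        hash_fn = _keyed(hash_fn, salt)  # each salt wraps the previous level
    return hash_fn(key)


def kdf16(key: bytes, path: list[bytes]) -> bytes:
    return vmess_kdf(key, path)[:16]


def kdf12(key: bytes, path: list[bytes]) -> bytes:
    return vmess_kdf(key, path)[:12]


k = bytes(range(16))
# With an empty path the construction collapses to plain HMAC-SHA256
print(vmess_kdf(k, []) == hmac.new(b"VMess AEAD KDF", k, hashlib.sha256).digest())
# Swapping two path elements yields an unrelated key
print(vmess_kdf(k, [b"a", b"b"]) == vmess_kdf(k, [b"b", b"a"]))
```

Because a wrong path order still produces a perfectly valid-looking 16-byte key, mistakes only surface later as GCM tag failures.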

The body encryption uses a different scheme entirely. For AES-128-GCM body security, Xray employs a Shake128 XOF (extendable output function) to generate masks for the 2-byte chunk length fields - a form of length obfuscation that prevents statistical analysis of chunk sizes. Each chunk can also include random padding (0-63 bytes) derived from the same Shake128 stream:

import hashlib
import struct

class ShakeSizeMask:
    """Mask generator for VMess chunk length obfuscation using SHAKE-128."""

    def __init__(self, nonce: bytes) -> None:
        self._hasher = hashlib.shake_128(nonce)
        # Pre-compute 8192 bytes (4096 masks); a long-lived stream needs more
        self._stream = self._hasher.digest(8192)
        self._offset = 0

    def next_mask(self) -> int:
        """Returns a 2-byte mask to XOR with the chunk length."""
        val = struct.unpack_from(">H", self._stream, self._offset)[0]
        self._offset += 2
        return val

    def next_padding_len(self) -> int:
        """Returns padding length (0-63 bytes) for this chunk."""
        return self.next_mask() % 64
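Since both peers derive the same mask stream from the same seed, unmasking is just a second XOR, and the scheme only works while both sides consume masks in lockstep. The following sketch illustrates that property; the helper name `shake_masks`, the seed value and the chunk sizes are placeholders chosen for the example.

```python
import hashlib
import struct


def shake_masks(seed: bytes, count: int) -> list[int]:
    """First `count` 2-byte big-endian masks from a SHAKE-128 stream."""
    stream = hashlib.shake_128(seed).digest(2 * count)
    return [struct.unpack_from(">H", stream, 2 * i)[0] for i in range(count)]


seed = b"example-body-iv!"   # placeholder 16-byte seed
sizes = [100, 1500, 42]      # real chunk sizes before masking
masks = shake_masks(seed, len(sizes))

on_wire = [s ^ m for s, m in zip(sizes, masks)]
in_sync = [w ^ m for w, m in zip(on_wire, masks)]

# A receiver that has skipped a single mask decodes every later size wrongly
skewed = shake_masks(seed, len(sizes) + 1)[1:]
out_of_sync = [w ^ m for w, m in zip(on_wire, skewed)]

print(in_sync == sizes, out_of_sync == sizes)
```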

The VMess nonce construction is also unusual - rather than a simple incrementing counter, it combines a 2-byte big-endian counter with bytes 2-11 of the session IV to form a 12-byte nonce:

import struct

class VMessNonce:
    """VMess nonce: counter_BE2 || IV[2:12]. Not a simple incrementing counter."""

    def __init__(self, iv: bytes) -> None:
        self._buf = bytearray(12)
        self._buf[2:12] = iv[2:12]  # Fixed suffix from session IV
        self._count = 0

    def next(self) -> bytes:
        """Return next nonce and advance counter."""
        struct.pack_into(">H", self._buf, 0, self._count & 0xFFFF)
        self._count += 1
        return bytes(self._buf)

Putting it all together, the full AEAD chunk send path illustrates how a single sendall() becomes an obfuscated stream of variable-length chunks with masked sizes and random padding:

import os
import struct

def vmess_send_chunk(cipher, nonce, mask_gen, payload: bytes) -> bytes:
    """Encrypt one VMess AEAD body chunk with size masking and padding.

    Wire format: [masked_size (2B)] [AEAD(payload)] [random padding]
    The 2-byte size is XOR'd with a SHAKE-128 mask, making each
    chunk header appear random to a passive observer."""
    # The padding length is drawn from the mask stream before the size mask
    padding_len = mask_gen.next_padding_len()
    padding = os.urandom(padding_len)
    ciphertext = cipher.encrypt(nonce.next(), payload, None)  # payload + 16B tag

    # Size = payload_len + tag_len + padding_len, then XOR with mask
    raw_size = len(ciphertext) + padding_len
    masked_size = struct.pack(">H", raw_size ^ mask_gen.next_mask())
    # Padding follows the ciphertext, so it is counted exactly once in raw_size
    return masked_size + ciphertext + padding

VMess also supports a flexible transport layer - TCP, WebSocket, HTTP/2, mKCP (UDP with header obfuscation to mimic SRTP/uTP/DTLS/WireGuard), QUIC, and gRPC. This modularity was a major advantage over ShadowSocks at the time and allowed VMess traffic to be relayed through CDNs via WebSocket.

VMess configuration URLs might look like the below:

vmess://eyJ2IjoiMiIsInBzIjoiRXhhbXBsZSIsImFkZCI6IjEyMy4xLjIuMyIsInBvcnQiOiI0NDMiLCJpZCI6ImE2MjMzMGQxLThmNDMtNDhhZS1iOTAwLWMxYjEyMzQ1Njc4OSIsImFpZCI6IjAiLCJzY3kiOiJhZXMtMTI4LWdjbSIsIm5ldCI6IndzIiwidHlwZSI6Im5vbmUiLCJob3N0IjoiZXhhbXBsZS5jb20iLCJwYXRoIjoiL3BhdGgiLCJ0bHMiOiJ0bHMiLCJzbmkiOiJleGFtcGxlLmNvbSJ9
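The part after vmess:// is a base64-encoded JSON object (the leading eyJ is the encoding of `{"`). The sketch below shows one way to read and build such links. The function names are hypothetical, the field names in the example dictionary are assumptions for illustration, and clients in the wild may differ in padding or alphabet handling.

```python
import base64
import json


def parse_vmess_link(link: str) -> dict:
    """Decode a vmess:// share link into its JSON fields."""
    prefix = "vmess://"
    if not link.startswith(prefix):
        raise ValueError("not a vmess:// link")
    blob = link[len(prefix):]
    blob += "=" * (-len(blob) % 4)  # restore any stripped base64 padding
    return json.loads(base64.b64decode(blob))


def build_vmess_link(fields: dict) -> str:
    """Encode a dictionary of fields as a vmess:// share link."""
    raw = json.dumps(fields, separators=(",", ":")).encode("utf-8")
    return "vmess://" + base64.b64encode(raw).decode("ascii")


# Example values only; 192.0.2.1 is a documentation address
example = {"v": "2", "ps": "Example", "add": "192.0.2.1", "port": "443",
           "net": "ws", "tls": "tls"}
link = build_vmess_link(example)
print(link)
print(parse_vmess_link(link)["add"])
```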

Challenges

VMess has the most documented detection history for any protocol in this list.

The legacy AES-128-CFB header was completely inadequate. The GFW was able to exploit CFB mode’s malleability to craft a padding length oracle. This is an attack that flips specific bits in the encrypted P_Len nibble and replays modified packets to test for different server timing depending on whether the FNV1a checksum aligned correctly at the modified padding length. Roughly 16 crafted probes could confirm a VMess endpoint with a very high degree of certainty.

The authentication credential, HMAC-MD5(UUID, timestamp), had a roughly 120-second replay window (timestamps rounded to 30-second intervals). Within that window, any captured authentication bytes could be replayed verbatim. The server also attempted header decryption only on valid auth, so its processing time differed measurably between valid and invalid auth - about 1-2ms, small but sufficient for detection over the network.

AEAD header improvements (mandatory since 2022 - alterId=0) fixed the more direct exploits - the GCM tag prevents modification and the AuthID now uses AES-ECB encrypted timestamps with CRC32 validation. However, traffic still triggers the USENIX 2023 fully-encrypted heuristic (uniformly random bytes, no protocol signature). The first packet also has a recognizable structure: always exactly 16B AuthID + 18B encrypted length + 8B nonce + an N-byte encrypted instruction, producing predictable size patterns.

The Golang crypto/tls fingerprinting issue, however, was perhaps the largest embarrassment and operational failure. Go’s TLS implementation produces a ServerHello with very distinctive cipher suite preferences and extension ordering that matches no browsers or web servers. The JA3S hash for V2Ray’s TLS was essentially a unique identifier - researchers noted blocking could be accomplished with “one line of iptables rules”. This spurred the adoption of uTLS for client-side fingerprint mimicry, which eventually became the foundation of REALITY.

On the implementation side, the recursive HMAC-SHA256 KDF nesting order can be error-prone. Getting the path kdf(key, [s1, s2, s3]) wrong produces silent failures. Additionally, the Shake128 mask state must be kept perfectly synchronized between client and server - a single desync renders the rest of the stream unreadable with no recovery possible.

Trojan

History

Trojan was developed by GreaterFire and the trojan-gfw organization, and early releases date to mid-2018. The project was built around the insight that appearing random is itself suspicious. If censors determined that roughly 50% of traffic is HTTP, ~40% is TLS, and 10% is unrecognizable high-entropy communications, then the last 10% becomes a priority investigation target. Rather than creating another novel encrypted protocol, Trojan chose to hide inside the most common traffic on the internet - HTTPS.

The design sentiment criticized ShadowSocks’s approach of using custom stream ciphers, arguing that leveraging proven TLS was more secure, and more practical. The project tagline echoes this: “imitating the most common protocol, to an extent that it behaves identically, could help bypass the Great Firewall permanently.”10

Fundamentals

The protocol is deceptively simple. The client connects to the server and performs a genuine TLS handshake using a valid CA-signed certificate (typically from Let’s Encrypt). This is not mimicry - it is HTTPS. After the TLS tunnel is established, the client sends its authentication and proxy request.

The packet structure after the TLS handshake:

[SHA224(password) hex (56B)] [CRLF] [CMD (1B)] [ATYP (1B)] [DST.ADDR] [DST.PORT (2B)] [CRLF] [Payload]

The password is hashed with SHA224 and converted to a 56-byte hexadecimal string. The hex encoding is deliberate to avoid accidentally including 0x0D0A (CRLF) in the hash output, which would break the protocol’s field delimiter. The command byte is 0x01 for TCP CONNECT or 0x03 for UDP ASSOCIATE, followed by the same SOCKS5-style address format used by ShadowSocks.

Python Connection Flow Example

The entire Trojan client can be reduced to a surprisingly small amount of code. The authentication, request header, and first payload can all be sent in a single write after the TLS handshake completes:

import hashlib
import ipaddress
import socket
import ssl

COMMAND_TCP = 0x01
COMMAND_UDP = 0x03

def trojan_key(password: str) -> bytes:
    """Key = SHA224(password) hex-encoded, 56 bytes.

    Hex encoding avoids CRLF bytes (0x0D0A) in the hash output."""
    return hashlib.sha224(password.encode("utf-8")).hexdigest().encode("ascii")

def encode_socks5_addr(host: str, port: int) -> bytes:
    """SOCKS5 address: type(1) + addr(variable) + port(2)."""
    try:
        addr = ipaddress.ip_address(host)
        if addr.version == 4:
            return b"\x01" + addr.packed + port.to_bytes(2, "big")
        return b"\x04" + addr.packed + port.to_bytes(2, "big")
    except ValueError:
        # Not an IP literal: send it as a length-prefixed domain name
        encoded = host.encode("utf-8")
        return b"\x03" + bytes([len(encoded)]) + encoded + port.to_bytes(2, "big")

def trojan_header(password: str, host: str, port: int) -> bytes:
    """Build complete Trojan request header."""
    key = trojan_key(password)
    cmd = bytes([COMMAND_TCP])
    addr = encode_socks5_addr(host, port)
    return key + b"\r\n" + cmd + addr + b"\r\n"

Connecting to a Trojan server and opening a tunnel is then just a TLS socket with one sendall:

def trojan_connect(server: str, server_port: int, password: str,
                   dest_host: str, dest_port: int, sni: str | None = None):
    """Establish a Trojan tunnel through a TLS connection."""
    sock = socket.create_connection((server, server_port))
    ctx = ssl.create_default_context()
    tls_sock = ctx.wrap_socket(sock, server_hostname=sni or server)

    # Send the header right after the handshake; a real client appends the
    # first payload to this same write to save a round trip
    header = trojan_header(password, dest_host, dest_port)
    tls_sock.sendall(header)
    return tls_sock  # Now just a normal bidirectional stream

That is pretty much the entire protocol. The server reads the first 56 bytes, checks if they match the hex SHA224 of any configured password, and if not - redirects the connection to a preset endpoint, typically a real web server on 127.0.0.1:80. The probe receives a genuine webpage, making the server indistinguishable from other HTTPS web servers. There are no distinctive error responses, no connection drop patterns, so very little in the way of fingerprinting.
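The server-side decision described above (valid request or silent fallback) can be sketched as a single parsing function. This is a minimal sketch under stated assumptions: the function name `parse_trojan_request` is hypothetical, the whole request is assumed to be available in one buffer, and returning None stands in for "hand the connection to the cover web server".

```python
import hashlib
import hmac
import ipaddress


def parse_trojan_request(buf: bytes, passwords: list[str]):
    """Return (cmd, host, port, payload), or None to signal fallback."""
    if len(buf) < 58 or buf[56:58] != b"\r\n":
        return None
    known = [hashlib.sha224(p.encode("utf-8")).hexdigest().encode("ascii")
             for p in passwords]
    # Constant-time comparison so the check itself does not leak timing
    if not any(hmac.compare_digest(buf[:56], k) for k in known):
        return None
    try:
        cmd, atyp = buf[58], buf[59]
        pos = 60
        if atyp == 0x01:                      # IPv4
            host = str(ipaddress.IPv4Address(buf[pos:pos + 4]))
            pos += 4
        elif atyp == 0x04:                    # IPv6
            host = str(ipaddress.IPv6Address(buf[pos:pos + 16]))
            pos += 16
        elif atyp == 0x03:                    # length-prefixed domain
            n = buf[pos]
            host = buf[pos + 1:pos + 1 + n].decode("ascii")
            pos += 1 + n
        else:
            return None
    except (IndexError, ValueError):
        return None
    port = int.from_bytes(buf[pos:pos + 2], "big")
    if buf[pos + 2:pos + 4] != b"\r\n":
        return None
    return cmd, host, port, buf[pos + 4:]


key = hashlib.sha224(b"example-password").hexdigest().encode("ascii")
request = (key + b"\r\n" + b"\x01" + b"\x03" + bytes([11]) + b"example.com"
           + (443).to_bytes(2, "big") + b"\r\n" + b"GET / HTTP/1.1\r\n")
print(parse_trojan_request(request, ["example-password"]))
print(parse_trojan_request(request, ["another-password"]))
```

Every failure path returns the same value, which mirrors the point made above: a prober should not be able to tell a wrong password from a malformed request.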

For WebSocket transports, there is one additional subtlety worth noting. The Trojan header should be coalesced with the first payload into a single WebSocket frame, rather than sent as a separate frame. This avoids a distinctive pattern where the server sees a small frame (just the header) followed by a larger one (the actual data):

class TrojanWsCoalesceStream:
    """Wraps WebSocket: first sendall() prepends [header][data] in one frame."""

    def __init__(self, ws_stream, header: bytes) -> None:
        self._stream = ws_stream
        self._header = header
        self._sent = False

    def sendall(self, data: bytes) -> None:
        if not self._sent:
            self._sent = True
            data = self._header + data  # Coalesce header with first payload
        self._stream.sendall(data)

This approach was later extended by Trojan-Go, a complete Go reimplementation that added multiplexing (carrying multiple TCP connections over a single TLS tunnel), WebSocket support for CDN relay, and even a secondary ShadowSocks AEAD encryption layer for protecting WebSocket traffic from untrusted CDN intermediaries.

Trojan configuration URLs might look like the below:

trojan://[email protected]:443?security=tls&sni=example.com&type=tcp#Example

trojan://[email protected]:443?security=tls&type=ws&host=example.com&path=/path#WS-Example

Challenges

No currently published research demonstrates a fully reliable active detection method for Trojan. Its strength is fundamental - the TLS handshake is genuine, the certificate is CA-signed, and failed authentication results in a real webpage being served. There is no distinctive error response, no timing oracle, nothing to fingerprint at the protocol level.

A workable detection vector is TLS-in-TLS fingerprinting - this technique applies to all TLS-based proxies. When clients visit https://example.com through a Trojan proxy, the inner TLS handshake (client to example.com) occurs inside the outer TLS tunnel (client to proxy). Even though the outer TLS encrypts content, the sizes of the inner handshake records are still visible as outer TLS Application Data record lengths. The pattern - a ~300B record (inner ClientHello), a ~3000B record (inner ServerHello + certificates), then a ~150B record (inner KeyExchange) - does not occur in normal HTTPS traffic and can be identified by Machine Learning classifiers with a high degree of accuracy. This is precisely what XTLS-Vision was designed to address with its padding strategy.
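To make the size pattern concrete, the toy check below compares the first three record sizes of a flow against the rough ~300 / ~3000 / ~150 byte sequence described above. It is a deliberately naive sketch: the function name, the 50% tolerance and the sample flows are all assumptions for illustration, and real classifiers rely on far richer features (timing, direction, burst structure).

```python
def matches_tls_in_tls(record_sizes: list[int], tolerance: float = 0.5) -> bool:
    """Toy check: do the first three records resemble ~300 / ~3000 / ~150 bytes?"""
    expected = (300, 3000, 150)
    if len(record_sizes) < len(expected):
        return False
    return all(
        abs(seen - want) <= want * tolerance
        for seen, want in zip(record_sizes, expected)
    )


tunnelled_handshake = [310, 2890, 140, 1200]   # made-up sizes
uniform_records = [1100, 1300, 1250, 1200]     # made-up sizes
print(matches_tls_in_tls(tunnelled_handshake))
print(matches_tls_in_tls(uniform_records))
```

The first flow matches and the second does not, which is the intuition behind padding small records into a uniform size band.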

Operationally, Trojan requires a valid domain with a CA-signed certificate, which creates a paper trail. The GFW has been reported to monitor Certificate Transparency logs for newly issued certificates on suspicious IP ranges. The cover website must be plausible - a default nginx page on a VPS in a datacenter commonly used for proxy servers is as good as a neon sign. And the Trojan header must be coalesced with the first data payload into a single TCP segment; sending them as separate write() calls produces a tiny first segment (the 56-byte hash + command + address) followed by a larger data segment, creating a recognizable size pattern.

VLESS

History

VLESS was created by a developer known as RPRX as part of Project X, launched on 23/11/2020 with Xray-core v1.0.0 released two days later as a fork of V2Fly. The split originated from a license dispute over XTLS - RPRX had contributed XTLS to V2Ray-core, but a file carried an “All rights reserved” header rather than the BSD license used elsewhere, causing conflicts with Linux distribution packagers. Rather than resolve the licensing conflict, RPRX created Project X as a V2Ray superset.

Fundamentals

VLESS was created from a simple observation: when VMess runs over TLS, every byte is encrypted by VMess and then encrypted again by TLS. This both doubles CPU work for zero additional security benefit and creates a potentially recognizable pattern. VLESS strips out the built-in encryption entirely and delegates security to the underlying TLS layer.

No timestamp synchronization is needed, no double encryption overhead. Authentication uses UUID validation, with support for arbitrary strings mapped to UUIDs via the VLESS UUID Mapping Standard (UUIDv5 with nil namespace).

Python VLESS Header Example

The VLESS request header is surprisingly minimal compared to VMess. No timestamp synchronization, no double encryption - just a version byte, UUID, optional add-ons, and the destination:

import ipaddress
import uuid as uuid_module

VLESS_VERSION = 0
CMD_TCP = 1
CMD_UDP = 2

def uuid_to_bytes(uuid_str: str) -> bytes:
    """Parse UUID string to 16 bytes. Supports standard UUIDs and arbitrary strings
    (mapped via UUIDv5 with nil namespace, matching Xray-core)."""
    s = uuid_str.strip()
    hex_only = s.replace("-", "")
    if len(hex_only) == 32 and all(c in "0123456789abcdefABCDEF" for c in hex_only):
        return bytes.fromhex(hex_only)
    try:
        return uuid_module.UUID(s).bytes
    except ValueError:
        # Xray fallback: UUIDv5 with nil namespace for arbitrary password strings
        return uuid_module.uuid5(uuid_module.UUID(int=0), s).bytes

def encode_vless_addons(flow: str | None) -> bytes:
    """Encode VLESS header addons as a minimal protobuf message.

    When flow=xtls-rprx-vision, sends the flow value as a protobuf string field."""
    if not flow or flow.strip() != "xtls-rprx-vision":
        return b"\x00"  # No addons: 1 byte = 0
    flow_bytes = b"xtls-rprx-vision"
    # Protobuf: field 1 (tag 0x0A = field_number=1, wire_type=2), varint length, then string
    addons = bytes([0x0A, len(flow_bytes)]) + flow_bytes
    return bytes([len(addons)]) + addons

def encode_vless_addr(host: str) -> bytes:
    """VLESS address: type(1) + addr. Types are 1 = IPv4, 2 = domain, 3 = IPv6,
    which differ from the SOCKS5 values (1, 3, 4) used by Trojan."""
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        encoded = host.encode("utf-8")
        return b"\x02" + bytes([len(encoded)]) + encoded
    return (b"\x01" if addr.version == 4 else b"\x03") + addr.packed

def vless_request(uuid_str: str, host: str, port: int, flow: str | None = None) -> bytes:
    """Build a VLESS request header.

    Format: version(1) + UUID(16) + addons_len(1) + [addons] + cmd(1) + port(2,BE) + addr_type(1) + addr"""
    uuid_bytes = uuid_to_bytes(uuid_str)
    addons = encode_vless_addons(flow)
    cmd = bytes([CMD_TCP])
    port_bytes = port.to_bytes(2, "big")
    # The port precedes the address here, so the SOCKS5 encoder cannot be reused
    addr = encode_vless_addr(host)
    return bytes([VLESS_VERSION]) + uuid_bytes + addons + cmd + port_bytes + addr

XTLS Vision - Solving Double Encryption

The real innovation is XTLS. RPRX found that when users visit an HTTPS website through TLS proxies, the data is encrypted twice - once by the inner TLS connection to the destination, and then again by the outer TLS connection made to the proxy. XTLS reads the inner TLS handshake as it passes through, and once the inner handshake completes and encrypted application data begins flowing, it stops encrypting at the outer layer and forwards the already-encrypted data. On Linux, this also enables kernel-level Splice where the system kernel forwards TCP data directly without it passing through userspace memory at all.

The way Vision detects the transition from handshake to application data is by inspecting the TLS content type byte. During a handshake, TLS records carry content type 0x16 (Handshake). Once the handshake completes and encrypted application data begins flowing, the content type switches to 0x17 (Application Data). Vision monitors for this transition and adds random-length padding to the inner handshake records to prevent packet sizes from creating a recognizable TLS-in-TLS fingerprint:

# TLS record content types
TLS_HANDSHAKE = 0x16
TLS_APPLICATION_DATA = 0x17
TLS_CHANGE_CIPHER = 0x14

def detect_tls_application_data(data: bytes) -> bool:
    """Check if this TLS record contains application data (signals handshake is complete).

    Once detected, the outer encryption can be bypassed - the inner TLS is already encrypted."""
    if len(data) < 5:
        return False
    content_type = data[0]
    # TLS version check: major=0x03 (TLS), minor=0x01-0x03 (TLS 1.0-1.2) or 0x04 (TLS 1.3)
    if data[1] != 0x03 or data[2] not in (0x01, 0x02, 0x03, 0x04):
        return False
    return content_type == TLS_APPLICATION_DATA
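The check above looks at a single record header, but a buffer read from the tunnel usually holds several records back to back. The sketch below walks the 5-byte record headers to find the first Application Data record. The helper names are hypothetical, and the sample stream is synthetic (zero-filled payloads rather than a real handshake).

```python
import struct


def iter_tls_records(buf: bytes):
    """Yield (content_type, payload_length) for each complete TLS record in buf."""
    pos = 0
    while pos + 5 <= len(buf):
        content_type, major, _minor, length = struct.unpack_from(">BBBH", buf, pos)
        if major != 0x03 or pos + 5 + length > len(buf):
            break  # not TLS, or the record is still incomplete
        yield content_type, length
        pos += 5 + length


def first_application_data_index(buf: bytes):
    """Index of the first 0x17 record, or None if there is none yet."""
    for index, (content_type, _length) in enumerate(iter_tls_records(buf)):
        if content_type == 0x17:
            return index
    return None


def record(content_type: int, payload: bytes) -> bytes:
    """Build a synthetic record with a TLS 1.2 style version field."""
    return struct.pack(">BBBH", content_type, 0x03, 0x03, len(payload)) + payload


stream = record(0x16, bytes(200)) + record(0x14, b"\x01") + record(0x17, bytes(64))
print(list(iter_tls_records(stream)))
print(first_application_data_index(stream))
```

For the synthetic stream this reports three records, with the Application Data record at index 2.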

The XTLS-Vision flow (released October 2022) addresses the detection vector where packet length distributions and timing intervals of the inner TLS handshake create recognizable patterns. Vision wraps outbound data in “Vision blocks” that include random-length padding, making the inner TLS handshake records indistinguishable from application data by size:

import os
import struct

def vision_pad(data: bytes, is_first: bool, uuid_bytes: bytes) -> bytes:
    """Wrap data in a Vision block with adaptive padding.

    Block format: [UUID (16B, first only)] [cmd (1B)] [content_len (2B BE)]
                  [padding_len (2B BE)] [content] [random padding]

    Padding strategy:
    - Small packets (< 900 bytes): pad to 900-1400 bytes total
      (mimics TLS record sizes to defeat length-based fingerprinting)
    - Large packets (>= 900 bytes): light padding (0-255 bytes)
    """
    cmd = 0x01  # DATA
    if len(data) < 900:
        pad_len = 900 - len(data) + int.from_bytes(os.urandom(2), "big") % 500
    else:
        pad_len = int.from_bytes(os.urandom(1), "big")
    header = b""
    if is_first:
        header = uuid_bytes  # 16 bytes, only on first block
    header += struct.pack(">BHH", cmd, len(data), pad_len)
    return header + data + os.urandom(pad_len)
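The receiving side has to undo this framing before it can look at the inner TLS records. A minimal sketch of that inverse step follows, assuming exactly the block layout given in the docstring above; the function name `vision_unpad` and the sample values are hypothetical.

```python
import os
import struct


def vision_unpad(block: bytes, is_first: bool, uuid_bytes: bytes) -> tuple[int, bytes, bytes]:
    """Parse one block and return (cmd, content, remaining_bytes)."""
    pos = 0
    if is_first:
        if block[:16] != uuid_bytes:
            raise ValueError("first block does not start with the expected UUID")
        pos = 16
    cmd, content_len, pad_len = struct.unpack_from(">BHH", block, pos)
    pos += 5
    end = pos + content_len + pad_len
    if end > len(block):
        raise ValueError("incomplete block")
    # Padding is discarded; anything after it belongs to the next block
    return cmd, block[pos:pos + content_len], block[end:]


uid = bytes(range(16))                       # placeholder UUID bytes
content = b"\x16\x03\x01" + bytes(120)       # stand-in for a handshake record
padding = os.urandom(800)
block = uid + struct.pack(">BHH", 0x01, len(content), len(padding)) + content + padding

cmd, recovered, rest = vision_unpad(block + b"next", True, uid)
print(cmd, recovered == content, rest)
```

The padding length travels in the header, so the receiver never has to guess where the content ends.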

Once the inner TLS handshake completes and application data begins flowing, the server can send a cmd=0x02 (Direct) signal. At this point the server stops encrypting at the outer TLS layer entirely and begins forwarding already-encrypted inner TLS data directly on the raw TCP socket. On the client side, this requires reading from a duplicated raw file descriptor while continuing to write through the SSL socket - a neat trick that enables kernel-level Splice on Linux where the system copies TCP data between sockets without it ever touching userspace memory.

REALITY - Borrowing Someone Else’s Identity

Then there’s REALITY (released June 2023), which eliminates another remaining detection vector: server TLS fingerprints. Traditional TLS proxies require a legitimate domain and certificate, and the server’s Go crypto/tls implementation has distinctive fingerprints in the ServerHello that differ from popular web servers. REALITY addresses this by not requiring a domain or certificate at all. Instead, the server is configured to point to legitimate external websites (e.g., www.microsoft.com) and presents the genuine ServerHello from that target during the handshake. REALITY clients can distinguish between the proxy certificate and an authentic intercepted certificate using an X25519 keypair and ShortId values.11

A client REALITY connection performs a custom TLS 1.3 handshake that crafts a ClientHello to impersonate a specific browser’s fingerprint (Chrome, Firefox, Safari, etc.). The server’s public key and a ShortId are used to authenticate the connection out-of-band:

def reality_connect(sock, sni: str, server_pub: bytes, short_id: bytes,
                    fingerprint: str = "chrome"):
    """Establish a REALITY TLS connection.

    1. Generate ephemeral X25519 keypair
    2. Send ClientHello mimicking the specified browser fingerprint
    3. Receive ServerHello (relayed from the real target site)
    4. Derive shared secret from server_pub + client_private via X25519
    5. Verify short_id matches to authenticate the proxy (not a MITM)
    6. Complete TLS 1.3 handshake with the derived keys
    """
    from cryptography.hazmat.primitives.asymmetric.x25519 import (
        X25519PrivateKey,
        X25519PublicKey,
    )

    client_private = X25519PrivateKey.generate()
    client_public = client_private.public_key()
    # exchange() expects a key object, so wrap the raw 32-byte server key first
    shared_secret = client_private.exchange(X25519PublicKey.from_public_bytes(server_pub))
    # ... TLS 1.3 handshake with browser-mimicking ClientHello ...

The recommended production configuration is VLESS + TCP + XTLS-Vision + REALITY + uTLS, where each component addresses a different detection vector - REALITY for server TLS fingerprint, Vision for TLS-in-TLS patterns, and uTLS for client TLS fingerprint impersonation (mimicking Chrome, Firefox, etc.). Some implementations support upwards of 10 browser fingerprints for REALITY including Chrome, Firefox, Safari, Edge, and even a post-quantum ML-KEM 768 hybrid key exchange variant.

VLESS configuration URLs might look like the below:

vless://[email protected]:443?encryption=none&security=tls&type=tcp&flow=xtls-rprx-vision&sni=example.com#TLS-Vision

vless://[email protected]:443?encryption=none&security=reality&type=tcp&flow=xtls-rprx-vision&pbk=PublicKeyBase64&sid=abcd1234&sni=www.microsoft.com&fp=chrome#REALITY

Challenges

The original XTLS (xtls-rprx-origin / xtls-rprx-direct) had an observable splice transition. When the inner TLS handshake completed, the outer encryption abruptly stopped - from the network perspective, TLS Application Data records were suddenly replaced by raw forwarded bytes with completely different characteristics. An inline device could detect these behavioural changes trivially.

Vision addressed the TLS-in-TLS size fingerprinting by adding random-length padding to inner handshake packets (small records padded to 900-1400 bytes); however, this approach has theoretical limits. TLS 1.3 complicates detection: it uses content type 0x17 (Application Data) for all encrypted records, including encrypted handshake messages whose real content type sits inside the encrypted payload. Vision must correctly parse inner TLS record boundaries from decrypted tunnel data and identify the genuine handshake-to-application transition, which creates edge cases with non-TLS traffic or unusual TLS implementations.

REALITY eliminates the domain and certificate requirement but introduces its own attack surface. An active prober can connect directly to the configured dest server (e.g., www.microsoft.com) and compare response timing - the REALITY server adds latency from proxying the handshake, creating a measurable RTT discrepancy. IP geolocation mismatches (a Tokyo VPS claiming to be www.microsoft.com) are also a weak signal. The X25519 public key distributed in share links also cannot be rotated without updating every client, and if leaked, allows anyone to authenticate. Additionally, uTLS fingerprints must be kept current as browsers update their TLS stacks semi-regularly - impersonating Chrome 110 when the real Chrome is at 130+ becomes a potential detection method. The utls library tracks these changes, but there is inherent lag behind current versioning.

TUIC

History

TUIC was created by @EAimTY with the protocol organization established in December 2021. Originally written in Rust using the quinn QUIC library, it saw five major protocol versions before development was discontinued with v5. Like Hysteria, it is QUIC-based - but where Hysteria takes a “violent” approach with its Brutal congestion control, TUIC takes a milder stance, preferring to work with QUIC’s native multiplexing and low-latency features rather than overriding its congestion mechanisms.

Fundamentals

The standout feature of TUIC v5 is its authentication mechanism. Rather than sending a password in a custom header (like Hysteria2) or embedding it in the first packet (like Trojan), TUIC uses the TLS Keying Material Exporter (RFC 5705) to bind authentication to the TLS session itself.

The TLS exporter is a function built into TLS 1.3 that lets applications derive keying material from an established session’s master secret. The crucial property is that the exported material is cryptographically bound to the specific TLS session - two different TLS sessions will produce completely different exports even with the same inputs. TUIC exploits this by using the client’s UUID as the export label and the raw password bytes as the context:

def derive_tuic_token(uuid_bytes: bytes, password: str, tls_exporter) -> bytes:
    """Derive TUIC v5 authentication token from TLS keying material.

    The TLS exporter (RFC 5705) binds the token to this specific session.
    Even if an attacker captures the token, it cannot be replayed on a
    different connection because the underlying TLS master secret differs.

    Label: client UUID (16 bytes)
    Context: raw UTF-8 password bytes
    Output: 32-byte token
    """
    label = uuid_bytes
    context = password.encode("utf-8")
    return tls_exporter(label, context, length=32)

The token is then sent on a QUIC unidirectional stream alongside the protocol version byte (0x05 for TUIC v5), command byte, and the UUID itself. The server independently derives the same token using its copy of the password and the same TLS session - if the tokens match, the client is authenticated.
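The session-binding property can be demonstrated without a QUIC stack by swapping in a stand-in exporter. In the sketch below, `fake_exporter` is a hypothetical placeholder built on HMAC-SHA256 and is explicitly not the RFC 5705 construction; a random per-connection secret plays the role of the TLS session secret that both peers share. The UUID and password are example values.

```python
import hashlib
import hmac
import os
import uuid


def fake_exporter(session_secret: bytes):
    """Stand-in for a TLS exporter. NOT the real RFC 5705 construction."""
    def export(label: bytes, context: bytes, length: int) -> bytes:
        # Length-prefix the label so label/context boundaries are unambiguous
        msg = len(label).to_bytes(2, "big") + label + context
        return hmac.new(session_secret, msg, hashlib.sha256).digest()[:length]
    return export


user = uuid.UUID("a62330d1-8f43-48ae-b900-c1b123456789").bytes
password = "example-password".encode("utf-8")

session_a = fake_exporter(os.urandom(32))  # one connection, secret shared by both peers
session_b = fake_exporter(os.urandom(32))  # a different connection

client_token = session_a(user, password, 32)
server_token = session_a(user, password, 32)
replayed_elsewhere = session_b(user, password, 32)

print(hmac.compare_digest(client_token, server_token))
print(hmac.compare_digest(client_token, replayed_elsewhere))
```

Within one session the two sides agree on the token, while the same UUID and password on another connection produce a different one, so a captured token is useless elsewhere.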

After authentication, TCP tunneling is straightforward - the client opens a QUIC bidirectional stream with a connect header containing the destination address. UDP proxying is more interesting: TUIC v5 supports multiple relay modes including native QUIC datagrams and QUIC streams, with the client specifying the preferred mode during authentication. 0-RTT is supported for both, meaning the client can begin sending proxy data in the very first QUIC flight - before the handshake even completes.

TUIC supports configurable congestion control (BBR, Cubic, NewReno) rather than imposing its own. This makes it less aggressive than Hysteria but also more “network-fair” - it won’t monopolize bandwidth at the expense of other connections on the same link.

TUIC configuration URLs might look like the below:

tuic://uuid-here:[email protected]:443?alpn=h3&sni=example.com&congestion_control=bbr#Example

Challenges

TUIC faces the same QUIC throttling as Hysteria2, but with an additional protocol-specific concern: unlike Hysteria2, TUIC does not layer HTTP/3 on top of QUIC. It uses raw QUIC streams with its own binary protocol. A DPI system inspecting the first bytes of QUIC streams would see TUIC’s custom framing (0x05 version byte, command bytes) rather than HTTP/3 HEADERS frames. This makes TUIC more distinguishable from legitimate HTTP/3 traffic than Hysteria2, which at least uses standard HTTP/3 framing for authentication.

The auth-on-unidirectional-stream pattern also creates a distinctive signature: the QUIC handshake completes, then a small unidirectional stream opens carrying exactly 50 bytes (1B version + 1B command + 16B UUID + 32B token). A legitimate HTTP/3 server would not exhibit this exact pattern. The TLS exporter itself is cryptographically sound (session-bound, non-replayable), but the protocol’s wire-level behavior still differs from genuine HTTP/3.

Implementing the TLS exporter correctly requires that the underlying QUIC library expose export_keying_material(), which not all libraries do. The Rust quinn library’s API also changed between versions during TUIC’s active development, contributing to the maintenance burden that ultimately led to the project’s discontinuation.

Juicity

History

Juicity was created by mzz2017 (the developer behind the dae eBPF-based transparent proxy) and released in mid-2023 as a spiritual successor to TUIC after EAimTY discontinued active development.12 Written in Go using the quic-go library (as opposed to TUIC’s Rust quinn), it aims to provide a cleaner, simpler protocol while preserving TUIC’s core concept of cryptographically-bound authentication over QUIC.

Fundamentals

Juicity shares the same foundational approach as TUIC - QUIC transport with TLS exporter authentication - but strips away complexity. The ALPN must be h3 with no fallback, the version byte is 0x00 (versus TUIC’s 0x05), and authentication is “silent” - the client sends credentials and proceeds without waiting for a response. If the server rejects the auth, it terminates the QUIC connection.

The authentication flow uses the same TLS Keying Material Exporter as TUIC but with a subtly different wire format. The token is sent on a unidirectional QUIC stream:

```python
import struct

# Juicity auth wire format on a unidirectional QUIC stream:
# [version: 0x00 (1B)] [cmd: 0x00 (1B)] [UUID (16B)] [token (32B)]

def juicity_authenticate(quic_conn, uuid_bytes: bytes, password: str):
    """Send Juicity authentication on a unidirectional stream.

    Token derivation is identical to TUIC:
        export_keying_material(label=uuid, context=password, length=32)

    Key difference: Juicity auth is fire-and-forget.
    No response is expected - a rejected auth terminates the connection.
    """
    # quic_conn is an illustrative connection object; the QUIC library in
    # use must expose a TLS keying material exporter for this step.
    token = quic_conn.tls.export_keying_material(
        label=uuid_bytes,
        context=password.encode("utf-8"),
        length=32,
    )
    auth_stream = quic_conn.get_next_available_stream_id(is_unidirectional=True)
    auth_data = struct.pack("BB", 0x00, 0x00) + uuid_bytes + token
    quic_conn.send_stream_data(auth_stream, auth_data, end_stream=True)
```

After authentication, TCP tunneling opens a bidirectional QUIC stream with a connect header. Juicity uses its own address type encoding rather than SOCKS5 - 0x00 for domain names, 0x01 for IPv4, 0x02 for IPv6:

```python
import struct

# TCP connect header: [Network (1B)] [address]
# Address format: [type (1B)] [addr (variable)] [port (2B BE)]

NETWORK_TCP = 0x01
ADDR_HOSTNAME, ADDR_IPV4, ADDR_IPV6 = 0x00, 0x01, 0x02

def juicity_connect(quic_conn, host: str, port: int):
    """Open a TCP tunnel through Juicity."""
    # encode_juicity_address() is assumed to serialise host and port into
    # the address format described in the comment above.
    addr = encode_juicity_address(host, port)
    stream_id = quic_conn.get_next_available_stream_id()
    quic_conn.send_stream_data(stream_id, struct.pack("B", NETWORK_TCP) + addr)
    return stream_id  # Bidirectional stream, now ready for proxied data
```
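The `encode_juicity_address` helper is not defined in the listing above. A minimal sketch of it follows, using the same type codes. One detail here is an assumption rather than something stated in this post: hostnames are written with a one-byte length prefix, as TUIC does for its domain type. Treat that as illustrative and check it against the Juicity specification before relying on it.

```python
import ipaddress
import struct

ADDR_HOSTNAME, ADDR_IPV4, ADDR_IPV6 = 0x00, 0x01, 0x02


def encode_juicity_address(host: str, port: int) -> bytes:
    """Serialise host and port as [type (1B)] [addr] [port (2B big-endian)]."""
    if not 0 <= port <= 0xFFFF:
        raise ValueError("port out of range")
    try:
        ip = ipaddress.ip_address(host)
    except ValueError:
        # Not an IP literal, so treat it as a hostname.
        name = host.encode("idna")
        if not 0 < len(name) <= 255:
            raise ValueError("hostname must be 1-255 bytes")
        # Assumption: a single length byte precedes the hostname.
        body = bytes([ADDR_HOSTNAME, len(name)]) + name
    else:
        addr_type = ADDR_IPV4 if ip.version == 4 else ADDR_IPV6
        body = bytes([addr_type]) + ip.packed
    return body + struct.pack(">H", port)
```

IP literals are detected first so that a string such as `1.2.3.4` is sent as four raw bytes rather than as a seven-character hostname.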

The practical differences from TUIC boil down to simplicity and ecosystem. Juicity has fewer UDP relay modes (eliminating the native vs quic confusion that plagued TUIC v5), defaults to BBR congestion control, and integrates tightly with the dae/daed routing ecosystem. It also supports certificate chain pinning (pinned_certchain_sha256) for MITM protection with self-signed certificates.

Juicity configuration URLs might look like the below:

```
juicity://uuid-here:password-here@example.com:443?congestion_control=bbr&sni=example.com#Example
```
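Share links of this shape are ordinary URLs, so a client can take them apart with a standard URL parser. The sketch below uses Python's `urllib.parse` and accepts both the `tuic://` and `juicity://` schemes. The `ProxyLink` dataclass and `parse_share_link` function are names made up for this example, and the sample link uses placeholder credentials.

```python
from dataclasses import dataclass
from urllib.parse import parse_qs, unquote, urlsplit


@dataclass
class ProxyLink:
    scheme: str
    uuid: str
    password: str
    host: str
    port: int
    params: dict
    name: str


def parse_share_link(link: str) -> ProxyLink:
    """Split a tuic:// or juicity:// share link into its parts."""
    parts = urlsplit(link)
    if parts.scheme not in ("tuic", "juicity"):
        raise ValueError(f"unsupported scheme: {parts.scheme!r}")
    if not (parts.username and parts.password and parts.hostname and parts.port):
        raise ValueError("link must contain uuid, password, host and port")
    # parse_qs returns a list per key; keep only the first value.
    params = {key: values[0] for key, values in parse_qs(parts.query).items()}
    return ProxyLink(
        scheme=parts.scheme,
        uuid=unquote(parts.username),
        password=unquote(parts.password),
        host=parts.hostname,
        port=parts.port,
        params=params,
        name=unquote(parts.fragment),
    )


link = "juicity://uuid-here:password-here@example.com:443?congestion_control=bbr&sni=example.com#Example"
parsed = parse_share_link(link)
print(parsed.host, parsed.port, parsed.params["congestion_control"])
```

The userinfo and fragment are percent-decoded because passwords and profile names in share links may contain reserved characters.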

Challenges

Juicity shares TUIC’s detection surface (QUIC throttling, non-HTTP/3 stream patterns, auth-on-unidirectional-stream fingerprint) with the same limitations. The “silent auth” design - where no server response is expected and rejection terminates the QUIC connection - means a censor observing connection establishment could distinguish Juicity from normal HTTP/3 by the absence of the expected H3 SETTINGS and QPACK streams that a real HTTP/3 server sends immediately after handshake completion.

The quic-go library used by Juicity is more mature and stable than TUIC’s quinn, but 0-RTT replay protection still requires careful implementation - the server must reject replayed early data without breaking legitimate 0-RTT resumption.

ShadowTLS

History

ShadowTLS was created by ihciah in August 2022 and has evolved through three versions, each addressing detection vectors exposed by the previous. It occupies an interesting middle ground - it uses a real TLS handshake for camouflage, but unlike Trojan it does not actually use TLS to encrypt the proxied data.

Fundamentals

The approach is clever: the ShadowTLS server proxies a genuine TLS 1.3 handshake to a real upstream server (say, cloud.tencent.com), so any observer or active probe sees a perfectly valid TLS connection with real certificates from a reputable domain. The client actually completes a real TLS handshake with the upstream server through the ShadowTLS proxy - every byte of the handshake is legitimate and verifiable. After the handshake completes, the server switches to a custom HMAC-authenticated framing protocol for actual data transfer.

The version evolution is instructive:

- v1: Simple TLS relay - server proxies a real handshake then switches to raw data. Vulnerable because the abrupt behavioural change after the handshake is detectable.

- v2: Added HMAC authentication on the ServerRandom to distinguish legitimate clients from probes. Still vulnerable because the post-handshake data lacked integrity tagging.

- v3: Added per-frame HMAC tags, enabling the server to distinguish legitimate data frames from probe traffic at the frame level, not just at connection establishment.

Each post-handshake v3 frame is wrapped in a TLS Application Data record header to maintain the illusion:

```
[Content Type: 0x17 (1B)] [Version: 0x0303 (2B)] [Length (2B)] [HMAC (4B)] [Encrypted Payload]
```

The 4-byte HMAC serves as the authentication mechanism. It is computed over the frame data using a key derived from the pre-shared password and the ServerRandom value captured during the TLS handshake. Since the ServerRandom is unique per connection (it comes from the real upstream server), each session’s HMAC keys are different.

```python
import hashlib
import hmac

def derive_shadow_tls_key(password: str, server_random: bytes) -> bytes:
    """Derive the per-session HMAC key from the password and ServerRandom.

    ServerRandom is captured from the real upstream TLS handshake.
    """
    return hmac.new(
        password.encode("utf-8"),
        server_random,
        hashlib.sha256,
    ).digest()

def tag_frame(key: bytes, data: bytes) -> bytes:
    """Compute 4-byte HMAC tag for a ShadowTLS v3 data frame."""
    return hmac.new(key, data, hashlib.sha256).digest()[:4]
```

The critical active-probe resistance works like this: when a probe connects and completes the TLS handshake, it sees a genuine certificate and a genuine handshake. If it then sends data, the ShadowTLS server reads the first few bytes looking for a valid HMAC tag. If the HMAC doesn’t validate - which it won’t for any probe that doesn’t know the password - the server transparently forwards the data to the real upstream server. The probe gets a genuine TLS error or HTTP response, indistinguishable from connecting to the upstream server directly.
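The accept-or-forward decision described above can be sketched as follows. This is a simplified model that follows the frame layout and the truncated HMAC-SHA256 tag used in this section's examples. The reference ShadowTLS implementation differs in detail, and the function names and the `"proxy"` / `"upstream"` return values are invented for illustration.

```python
import hashlib
import hmac
import struct

TAG_LEN = 4
HEADER_LEN = 5  # content type (1B) + version (2B) + length (2B)


def tag_frame(key: bytes, data: bytes) -> bytes:
    """4-byte truncated HMAC-SHA256 over the payload."""
    return hmac.new(key, data, hashlib.sha256).digest()[:TAG_LEN]


def wrap_frame(key: bytes, payload: bytes) -> bytes:
    """Wrap payload as a TLS Application Data look-alike record with a tag."""
    body = tag_frame(key, payload) + payload
    return struct.pack(">BHH", 0x17, 0x0303, len(body)) + body


def route_record(key: bytes, record: bytes):
    """Return ("proxy", payload) for tagged frames, else ("upstream", record)."""
    if len(record) >= HEADER_LEN + TAG_LEN:
        content_type, version, length = struct.unpack(">BHH", record[:HEADER_LEN])
        body = record[HEADER_LEN:HEADER_LEN + length]
        if content_type == 0x17 and version == 0x0303 and len(body) == length >= TAG_LEN:
            tag, payload = body[:TAG_LEN], body[TAG_LEN:]
            # Constant-time comparison avoids leaking partial tag matches.
            if hmac.compare_digest(tag, tag_frame(key, payload)):
                return "proxy", payload
    # Unknown sender: relay the bytes untouched so the real server answers.
    return "upstream", record
```

Anything that fails the tag check is relayed byte-for-byte, so whatever response the probe sees is produced by the real upstream server.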

The key distinction from Trojan is architectural. Trojan uses TLS as its actual transport layer - the proxied data genuinely flows through TLS encryption. ShadowTLS uses TLS as a mask; after the handshake, the data is encrypted by the inner proxy protocol (typically ShadowSocks AEAD), and the TLS record framing is purely cosmetic. This means ShadowTLS acts as a transport wrapper that can sit beneath other protocols rather than replacing them.13

ShadowTLS is typically configured server-side rather than via share-link URLs.

Challenges

The version history itself tells the detection story. v1’s abrupt behavioural transition from genuine TLS to raw proxy data was trivially detectable - a middlebox could simply check whether post-handshake bytes conform to TLS Application Data record format (0x17 0x03 0x03 [length]). v2 added connection-level authentication but lacked per-frame integrity, allowing a man-in-the-middle to inject data after the handshake and observe whether the server processed it differently than the upstream would (ShadowSocks AEAD silently drops bad tags, while a real TLS server would send a TLS alert).
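The v1 check mentioned above is cheap for a middlebox to run. As a rough sketch (the function name is made up, and a real classifier would work on reassembled TCP streams rather than a single buffer), it only needs to walk the post-handshake bytes and confirm that they form a sequence of well-formed Application Data records:

```python
import struct

MAX_RECORD_LEN = 2**14 + 256  # upper bound on a TLS 1.3 ciphertext record


def looks_like_tls_app_data(stream: bytes) -> bool:
    """Return True if stream is a sequence of complete 0x17 0x03 0x03 records."""
    offset = 0
    while offset < len(stream):
        header = stream[offset:offset + 5]
        if len(header) < 5:
            return False  # truncated header
        content_type, version, length = struct.unpack(">BHH", header)
        if content_type != 0x17 or version != 0x0303:
            return False
        if length == 0 or length > MAX_RECORD_LEN:
            return False
        offset += 5 + length
    # A final record cut off mid-body leaves offset past the end of the buffer.
    return offset == len(stream) and offset > 0
```

Raw proxy bytes fail on the very first header, which is why v2 and v3 keep the record framing even though the contents are no longer TLS.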

v3’s per-frame HMAC addresses the direct exploits, but theoretical vectors remain. The TLS record sizes in ShadowTLS are determined by the inner proxy protocol’s chunk sizes (e.g., ShadowSocks’ 0x3FFF maximum), which may differ from the characteristic size distributions of real HTTPS traffic (web browsing, video streaming, API calls). Real TLS sessions also generate TLS alert records on errors - ShadowTLS’s cosmetic framing never produces these. The upstream server selection matters significantly: it must support TLS 1.3, have similar latency to the ShadowTLS server (to avoid timing discrepancies), be a plausible target for the server’s IP, and have consistent uptime. As of writing, no published research demonstrates successful v3 fingerprinting at scale.

Cloak

History